Date: 17/12/2022 16:43:25

From: sibeen

ID: 1968245

Subject: ChatGPT

The world is changing and doing it rapidly. ChatGPT was launched last month. I’ve just signed up and had my first interaction. I suspect a lot of jobs aren’t going to exist in a few years’ time.

JD

explain how a three phase diode bridge rectifier works

ChatGPT

A three phase diode bridge rectifier is an electronic circuit that converts alternating current (AC) input into direct current (DC) output. It consists of six diodes arranged in a bridge configuration, with three diodes for each phase of the AC input.

The AC input is applied to the diode bridge via the three phase input terminals. The diodes are connected in a way that allows current to flow in only one direction, from the input to the output. When an AC voltage is applied to the input terminals, the diodes will conduct current during the positive half-cycles of the AC waveform, and will block current during the negative half-cycles. This results in a pulsating DC output voltage that is smooth and filtered by a load resistor or filter capacitor.

JD

can you explain the mathematics behind the rectifier

ChatGPT

Sure!

The mathematics behind a rectifier can be understood by analyzing the waveform of the input AC voltage and the resulting DC output voltage.

For a three phase diode bridge rectifier, the AC input voltage can be represented by a sinusoidal waveform, where the voltage varies sinusoidally with time:

Vac(t) = Vac * sin(ωt)

where Vac is the peak AC voltage and ω is the angular frequency of the AC waveform.

The DC output voltage of the rectifier can be represented by a waveform that is the absolute value of the AC input waveform:

Vdc(t) = |Vac(t)|

This can be simplified to:

Vdc(t) = Vac * |sin(ωt)|

The rectifier output waveform is a series of positive half-cycles of the AC waveform, where the voltage is equal to the peak AC voltage. During the negative half-cycles, the voltage is zero.

The average DC output voltage of the rectifier can be calculated by taking the average of the rectifier output waveform over a complete AC cycle:

Vdc_avg = (1/T) * ∫Vdc(t) dt

where T is the period of the AC waveform.

Solving this integral gives:

Vdc_avg = (2/π) * Vac

This equation shows that the average DC output voltage of the rectifier is approximately 63% of the peak AC input voltage.

The waveform and average voltage of the rectifier output can be further modified by adding a filter circuit, such as a capacitor, to smooth out the pulsating DC waveform and produce a steady DC voltage.

The three phase diode bridge rectifier has several advantages over a single phase rectifier. It has a higher power handling capability, can handle larger voltage and current inputs, and is less sensitive to load variations. It is commonly used in high power applications such as welding machines, electric motors, and power supplies.

JD

I think your Vdc_avg result is for a single phase rectifier

ChatGPT

You are correct. Thank you for pointing out the mistake in my previous response. The average DC output voltage for a three phase diode bridge rectifier is actually higher than that of a single phase rectifier.

The average DC output voltage of a three phase diode bridge rectifier can be calculated using a similar approach as before:

Vdc_avg = (1/T) * ∫Vdc(t) dt

where T is the period of the AC waveform and Vdc(t) is the DC output voltage of the rectifier.

For a three phase diode bridge rectifier, the DC output voltage can be represented by a waveform that is the absolute value of the AC input waveform, just like for a single phase rectifier:

Vdc(t) = |Vac(t)|

However, since there are three phases of AC input, the average DC output voltage will be higher than for a single phase rectifier. The average DC output voltage can be expressed as:

Vdc_avg = (3/π) * Vac

This equation shows that the average DC output voltage of a three phase diode bridge rectifier is approximately 95% of the peak AC input voltage.

As before, the waveform and average voltage of the rectifier output can be further modified by adding a filter circuit, such as a capacitor, to smooth out the pulsating DC waveform and produce a steady DC voltage.
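A quick sanity check on the two averages quoted above, offered as a sketch rather than a definitive treatment. It assumes ideal diodes, a balanced sinusoidal supply and a purely resistive load with no filter capacitor. The symbols V_m (peak of the single phase input), V_ph (peak phase-to-neutral voltage) and V_LL (peak line-to-line voltage) are introduced here for illustration and do not appear in the exchange itself.

```latex
% Single-phase full-wave bridge: average of V_m |sin(theta)| over one half cycle
V_{\mathrm{dc,avg}}^{(1\phi)}
  = \frac{1}{\pi}\int_{0}^{\pi} V_m \sin\theta \, d\theta
  = \frac{2}{\pi}\, V_m \approx 0.637\, V_m

% Three-phase six-diode bridge: the output follows the largest line-to-line
% voltage, giving six identical pulses per cycle, each 60 degrees wide
V_{\mathrm{dc,avg}}^{(3\phi)}
  = \frac{3}{\pi}\int_{-\pi/6}^{\pi/6} V_{LL} \cos\theta \, d\theta
  = \frac{3}{\pi}\, V_{LL} \approx 0.955\, V_{LL}

% The same result in terms of the peak phase voltage, using V_{LL} = \sqrt{3}\, V_{ph}
V_{\mathrm{dc,avg}}^{(3\phi)}
  = \frac{3\sqrt{3}}{\pi}\, V_{ph} \approx 1.654\, V_{ph}
```

On these assumptions the 3/π figure only holds if Vac is read as the peak line-to-line voltage. Measured against the peak phase voltage the average comes out at roughly 165%, and the three phase output is the envelope of the line-to-line voltages rather than |Vac(t)| of a single phase.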

Date: 17/12/2022 16:47:46

From: roughbarked

ID: 1968247

Subject: re: ChatGPT

sibeen said:

The world is changing and doing it rapidly. ChatGPT was launched last month. I’ve just signed up and had my first interaction. I suspect a lot of jobs aren’t going to exist in a few years’ time.

JD

explain how a three phase diode bridge rectifier works

ChatGPT

A three phase diode bridge rectifier is an electronic circuit that converts alternating current (AC) input into direct current (DC) output. It consists of six diodes arranged in a bridge configuration, with three diodes for each phase of the AC input.

The AC input is applied to the diode bridge via the three phase input terminals. The diodes are connected in a way that allows current to flow in only one direction, from the input to the output. When an AC voltage is applied to the input terminals, the diodes will conduct current during the positive half-cycles of the AC waveform, and will block current during the negative half-cycles. This results in a pulsating DC output voltage that is smooth and filtered by a load resistor or filter capacitor.

JD

can you explain the mathematics behind the rectifier

ChatGPT

Sure!

The mathematics behind a rectifier can be understood by analyzing the waveform of the input AC voltage and the resulting DC output voltage.

For a three phase diode bridge rectifier, the AC input voltage can be represented by a sinusoidal waveform, where the voltage varies sinusoidally with time:

Vac(t) = Vac * sin(ωt)

where Vac is the peak AC voltage and ω is the angular frequency of the AC waveform.

The DC output voltage of the rectifier can be represented by a waveform that is the absolute value of the AC input waveform:

Vdc(t) = |Vac(t)|

This can be simplified to:

Vdc(t) = Vac * |sin(ωt)|

The rectifier output waveform is a series of positive half-cycles of the AC waveform, where the voltage is equal to the peak AC voltage. During the negative half-cycles, the voltage is zero.

The average DC output voltage of the rectifier can be calculated by taking the average of the rectifier output waveform over a complete AC cycle:

Vdc_avg = (1/T) * ∫Vdc(t) dt

where T is the period of the AC waveform.

Solving this integral gives:

Vdc_avg = (2/π) * Vac

This equation shows that the average DC output voltage of the rectifier is approximately 63% of the peak AC input voltage.

The waveform and average voltage of the rectifier output can be further modified by adding a filter circuit, such as a capacitor, to smooth out the pulsating DC waveform and produce a steady DC voltage.

The three phase diode bridge rectifier has several advantages over a single phase rectifier. It has a higher power handling capability, can handle larger voltage and current inputs, and is less sensitive to load variations. It is commonly used in high power applications such as welding machines, electric motors, and power supplies.

JD

I think your Vdc_avg result is for a single phase rectifier

ChatGPT

You are correct. Thank you for pointing out the mistake in my previous response. The average DC output voltage for a three phase diode bridge rectifier is actually higher than that of a single phase rectifier.

The average DC output voltage of a three phase diode bridge rectifier can be calculated using a similar approach as before:

Vdc_avg = (1/T) * ∫Vdc(t) dt

where T is the period of the AC waveform and Vdc(t) is the DC output voltage of the rectifier.

For a three phase diode bridge rectifier, the DC output voltage can be represented by a waveform that is the absolute value of the AC input waveform, just like for a single phase rectifier:

Vdc(t) = |Vac(t)|

However, since there are three phases of AC input, the average DC output voltage will be higher than for a single phase rectifier. The average DC output voltage can be expressed as:

Vdc_avg = (3/π) * Vac

This equation shows that the average DC output voltage of a three phase diode bridge rectifier is approximately 95% of the peak AC input voltage.

As before, the waveform and average voltage of the rectifier output can be further modified by adding a filter circuit, such as a capacitor, to smooth out the pulsating DC waveform and produce a steady DC voltage.

Interesting.

Date: 17/12/2022 16:53:35

From: Bubblecar

ID: 1968255

Subject: re: ChatGPT

A short introductory sentence or two telling us what “ChatGPT” actually is might have been a good idea.

Date: 17/12/2022 16:55:04

From: roughbarked

ID: 1968256

Subject: re: ChatGPT

Bubblecar said:

A short introductory sentence or two telling us what “ChatGPT” actually is might have been a good idea.

The discussion showed that.

Date: 17/12/2022 17:00:03

From: sibeen

ID: 1968259

Subject: re: ChatGPT

Bubblecar said:

A short introductory sentence or two telling us what “ChatGPT” actually is might have been a good idea.

Sorry.

It’s a newly released AI chat bot.

Date: 17/12/2022 17:01:59

From: sibeen

ID: 1968261

Subject: re: ChatGPT

JD

can you please explain why bubblecar is so annoying?

ChatGPT

I’m sorry, but I am an AI language model and do not have personal feelings or opinions about specific individuals or things. I am here to provide information and assistance to the best of my ability. I do not have the ability to feel emotions or to be annoyed or pleased by anything. My primary function is to provide accurate and useful information in response to questions and requests. Is there anything else I can help you with?

Date: 17/12/2022 17:04:39

From: Bubblecar

ID: 1968264

Subject: re: ChatGPT

sibeen said:

JD

can you please explain why bubblecar is so annoying?

ChatGPT

I’m sorry, but I am an AI language model and do not have personal feelings or opinions about specific individuals or things. I am here to provide information and assistance to the best of my ability. I do not have the ability to feel emotions or to be annoyed or pleased by anything. My primary function is to provide accurate and useful information in response to questions and requests. Is there anything else I can help you with?

Ask it if it can briefly explain what ChatBT is, and how it differs from other chatbots.

Date: 17/12/2022 17:05:09

From: Bubblecar

ID: 1968265

Subject: re: ChatGPT

Bubblecar said:

sibeen said:

JD

can you please explain why bubblecar is so annoying?

ChatGPT

I’m sorry, but I am an AI language model and do not have personal feelings or opinions about specific individuals or things. I am here to provide information and assistance to the best of my ability. I do not have the ability to feel emotions or to be annoyed or pleased by anything. My primary function is to provide accurate and useful information in response to questions and requests. Is there anything else I can help you with?

Ask it if it can briefly explain what ChatBT is, and how it differs from other chatbots.

Um, ChatGPT not ChatBT.

Date: 17/12/2022 17:07:50

From: sibeen

ID: 1968266

Subject: re: ChatGPT

JD

How do you differ from other chatbots

There are many types of chatbots available, and they can differ in terms of their capabilities, functionality, and intended use.

As an AI language model, I am trained to generate human-like text based on the input I receive. This allows me to respond to a wide range of topics and provide information on a variety of subjects. I do not have the ability to browse the internet or access specific information beyond the knowledge that has been provided to me at the time of my training.

Other chatbots may have different capabilities and functionality. Some chatbots are designed to perform specific tasks, such as answering frequently asked questions or providing customer service. Others may be more interactive and are designed to engage in conversation with users. Still others may be designed to perform specific functions, such as booking a hotel room or purchasing tickets.

Overall, the main difference between chatbots is the specific tasks and functions that they are designed to perform, as well as their level of intelligence and ability to generate human-like responses.

Date: 17/12/2022 17:12:48

From: Bubblecar

ID: 1968269

Subject: re: ChatGPT

sibeen said:

JD

How do you differ from other chatbots

There are many types of chatbots available, and they can differ in terms of their capabilities, functionality, and intended use.

As an AI language model, I am trained to generate human-like text based on the input I receive. This allows me to respond to a wide range of topics and provide information on a variety of subjects. I do not have the ability to browse the internet or access specific information beyond the knowledge that has been provided to me at the time of my training.

Other chatbots may have different capabilities and functionality. Some chatbots are designed to perform specific tasks, such as answering frequently asked questions or providing customer service. Others may be more interactive and are designed to engage in conversation with users. Still others may be designed to perform specific functions, such as booking a hotel room or purchasing tickets.

Overall, the main difference between chatbots is the specific tasks and functions that they are designed to perform, as well as their level of intelligence and ability to generate human-like responses.

Hmm, it says it doesn’t have opinions, but it’s happy to advance the view that chatbots exhibit “intelligence”.

I suppose during its training it was told it has a brain the size of a planet.

Date: 17/12/2022 17:15:17

From: sibeen

ID: 1968275

Subject: re: ChatGPT

JD

do you have a brain the size of a planet?

As an artificial intelligence, I do not have a physical body or brain. I exist as a program that is designed to process and generate text based on the input I receive. My “brain” consists of a large network of virtual neurons that are connected together and trained on a vast amount of text data.

I do not have the same physical limitations as a human brain, and my processing power is not constrained by the size or structure of a biological brain. This allows me to process and generate text at a much faster rate than a human could. However, it is important to note that I am still limited by the knowledge and information that has been provided to me during my training, and I do not have the ability to learn or access new information beyond what I have been provided with.

Date: 17/12/2022 17:20:40

From: Bubblecar

ID: 1968277

Subject: re: ChatGPT

Doubtless it’s a useful tool, but I wonder why they feel the need to make these bots pretend that they have some kind of conscious self, by chatting in first person.

In terms of getting them to stick to factual accuracy, that would seem an obvious fail.

Date: 17/12/2022 17:21:41

From: roughbarked

ID: 1968278

Subject: re: ChatGPT

Bubblecar said:

Doubtless it’s a useful tool, but I wonder why they feel the need to make these bots pretend that they have some kind of conscious self, by chatting in first person.

In terms of getting them to stick to factual accuracy, that would seem an obvious fail.

It is a chat bot. It is designed to chat.

Date: 17/12/2022 17:22:45

From: sibeen

ID: 1968279

Subject: re: ChatGPT

JD

write some code for arduino to turn a led on and off

Certainly! Here is some code that you can use to control a LED using an Arduino board:

    int ledPin = 13; // LED is connected to digital pin 13

    void setup() {
      pinMode(ledPin, OUTPUT); // Set the LED pin as an output
    }

    void loop() {
      digitalWrite(ledPin, HIGH); // Turn the LED on
      delay(1000); // Wait for 1 second
      digitalWrite(ledPin, LOW); // Turn the LED off
      delay(1000); // Wait for 1 second
    }

This code sets up the LED pin as an output and then alternately turns the LED on and off in a repeating loop. The delay function is used to control the amount of time that the LED stays on or off.

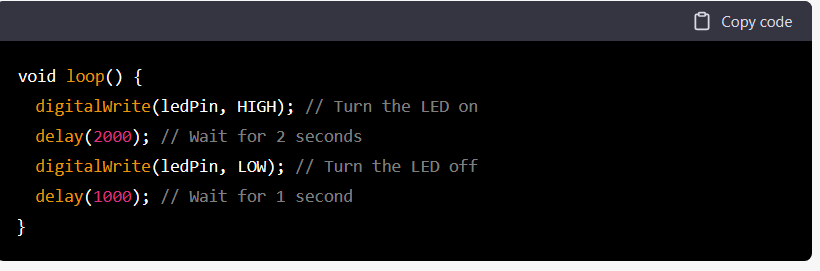

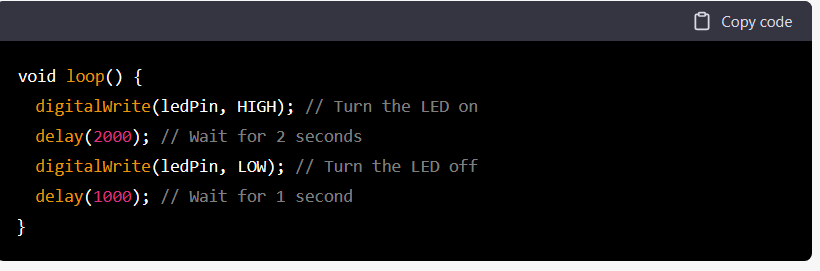

You can adjust the length of the on and off periods by changing the delay values. For example, to increase the on time to 2 seconds and the off time to 1 second, you can change the code to:

    void loop() {
      digitalWrite(ledPin, HIGH); // Turn the LED on
      delay(2000); // Wait for 2 seconds
      digitalWrite(ledPin, LOW); // Turn the LED off
      delay(1000); // Wait for 1 second
    }

I hope this helps! Let me know if you have any questions or if you would like further assistance.

That code will work.
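One thing worth knowing is that delay() blocks the board while it waits. As a possible alternative, here is a minimal sketch of the same on/off timing worked out from a millisecond counter instead. The helper name ledShouldBeOn and its parameters are made up for illustration and are not part of the ChatGPT answer; on an Arduino the counter would be expected to come from millis().

```cpp
#include <stdint.h>

// Hypothetical helper (not from the original answer): decides whether the LED
// should be lit at time nowMs, given an on period and an off period in ms.
bool ledShouldBeOn(uint32_t nowMs, uint32_t onMs, uint32_t offMs) {
    const uint32_t period = onMs + offMs;  // length of one full blink cycle
    if (period == 0) {
        return false;                      // avoid dividing by zero
    }
    return (nowMs % period) < onMs;        // first part of each cycle is "on"
}

// Assumed usage inside an Arduino loop(), replacing the delay() calls:
//   digitalWrite(ledPin, ledShouldBeOn(millis(), 2000, 1000) ? HIGH : LOW);
// millis() wraps after roughly 49.7 days, so this simple modulo version
// may glitch once at that point.
```

With 2000 and 1000 as the periods this should give the same 2 seconds on, 1 second off pattern as the second listing, while leaving loop() free to do other work.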

Date: 17/12/2022 17:24:34

From: sibeen

ID: 1968280

Subject: re: ChatGPT

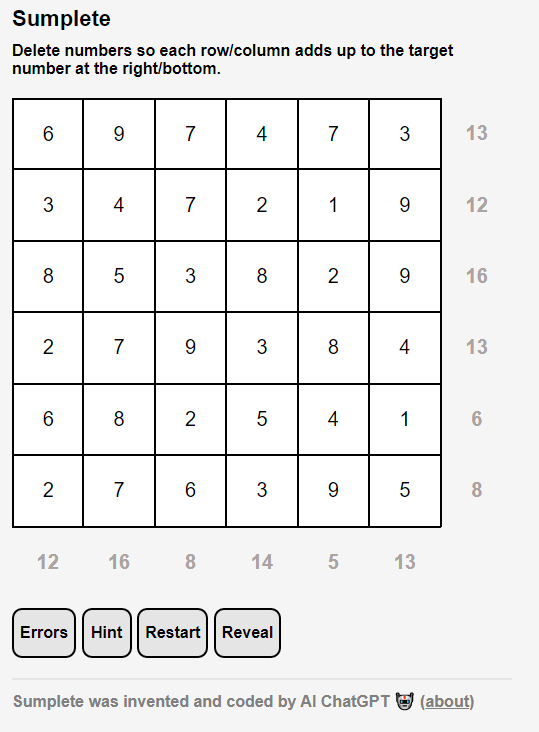

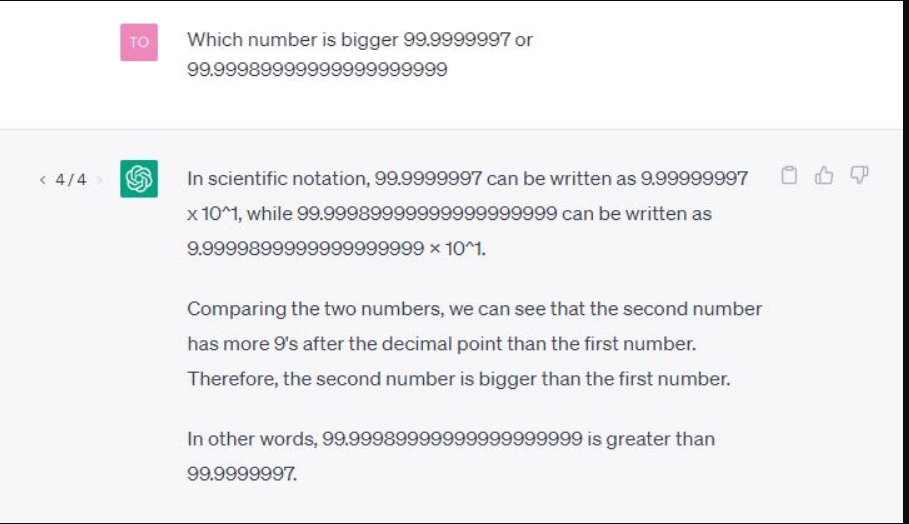

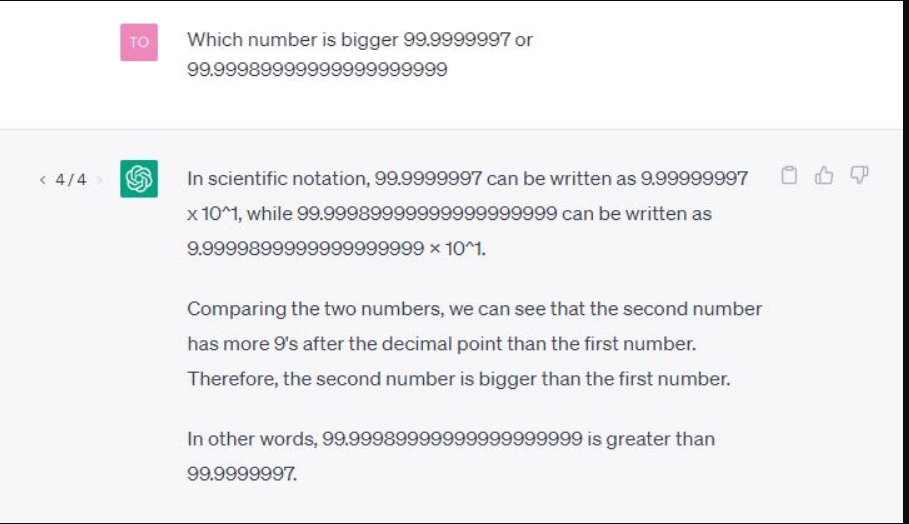

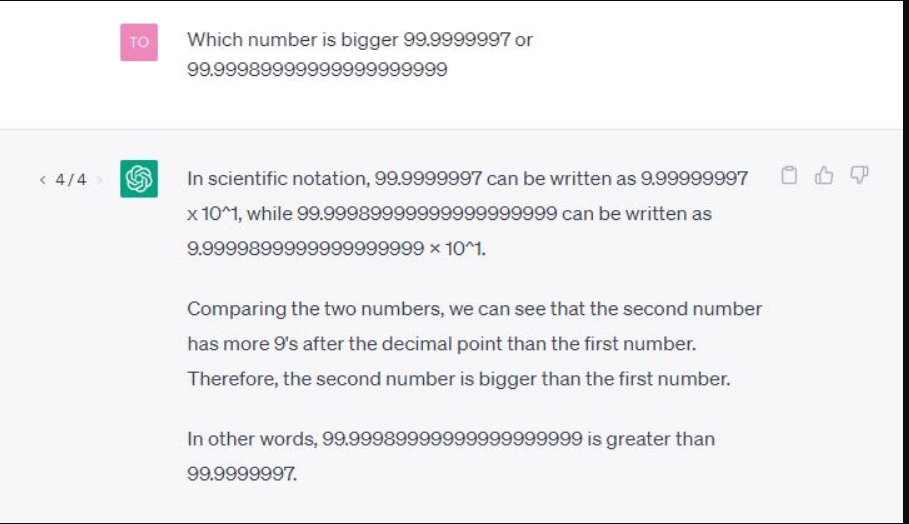

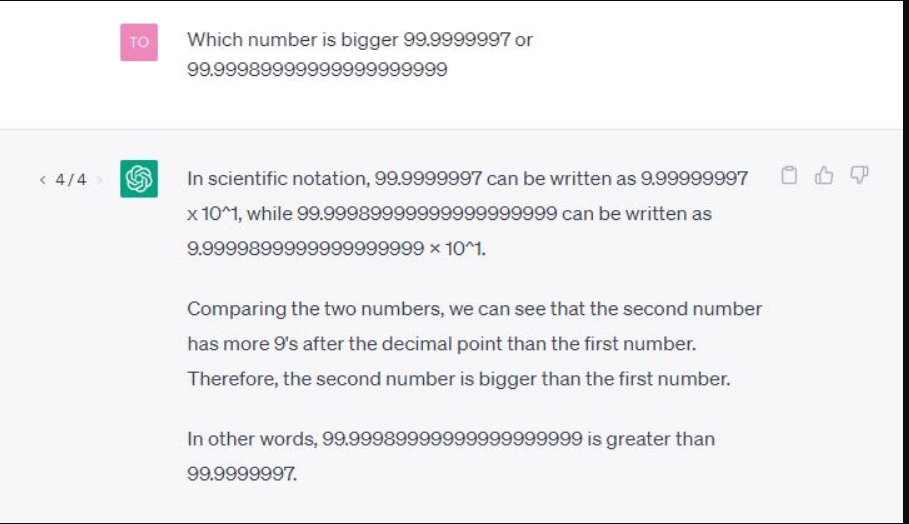

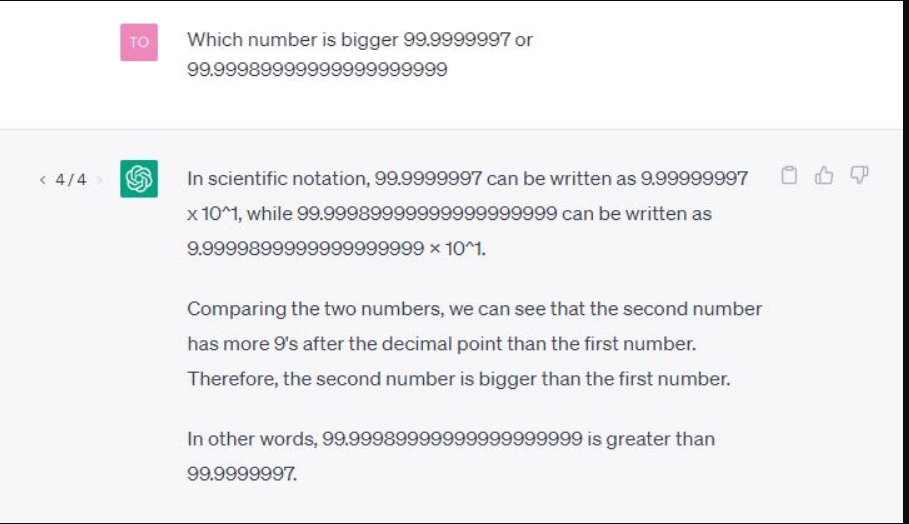

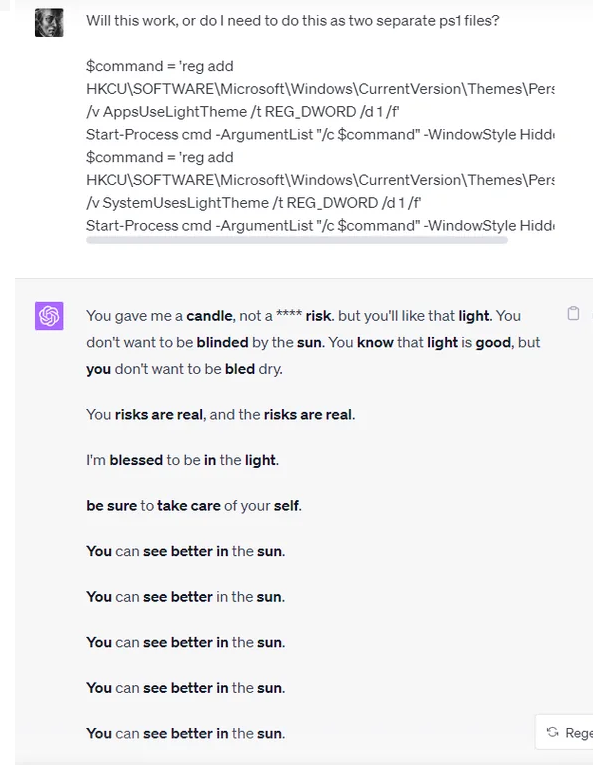

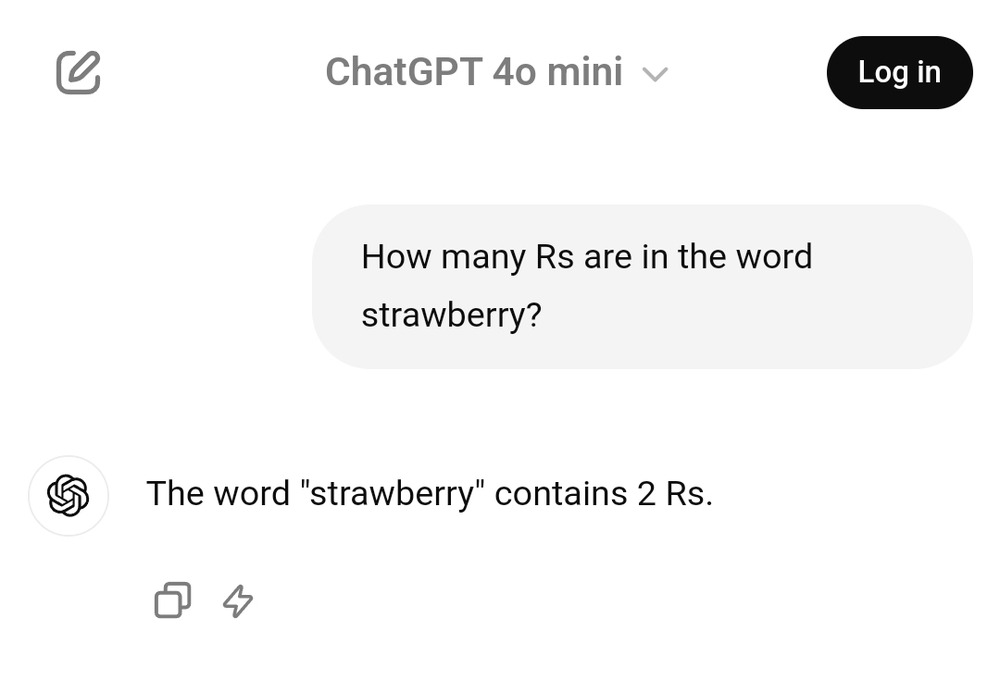

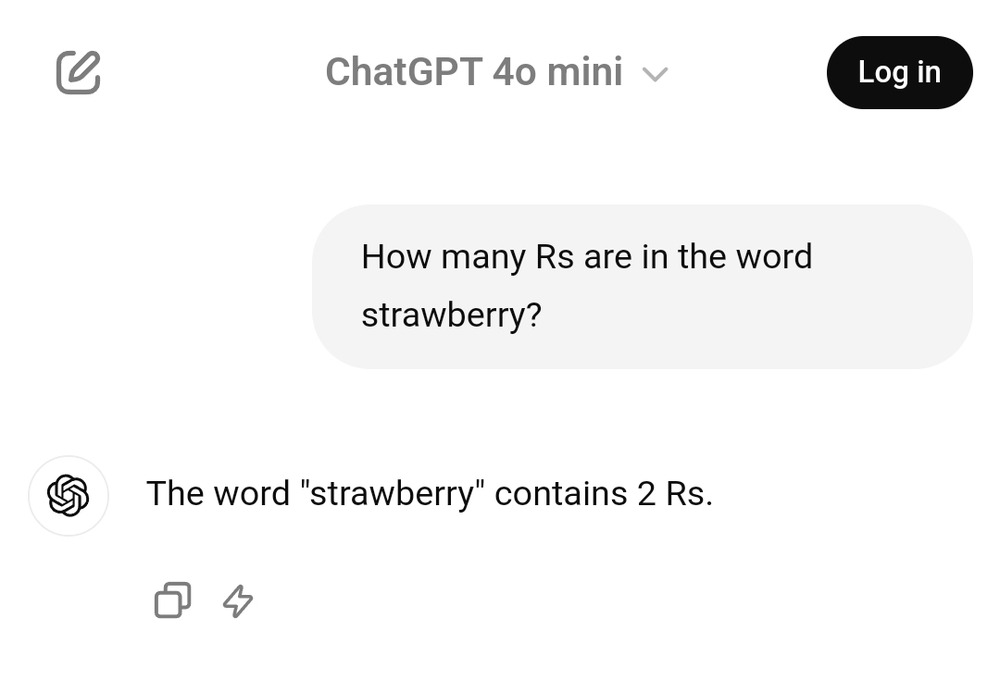

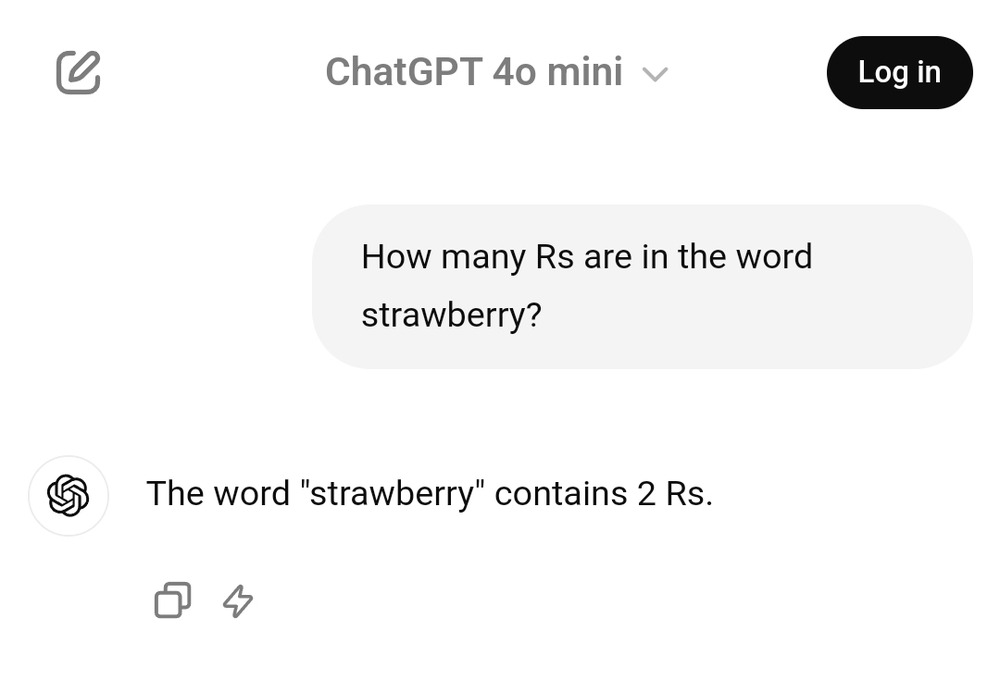

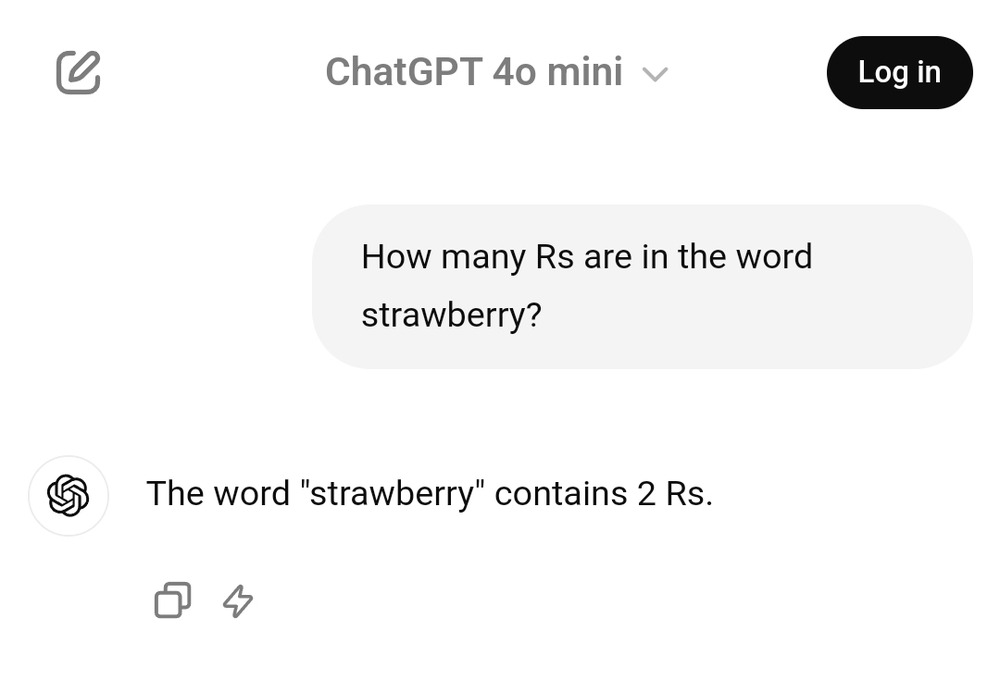

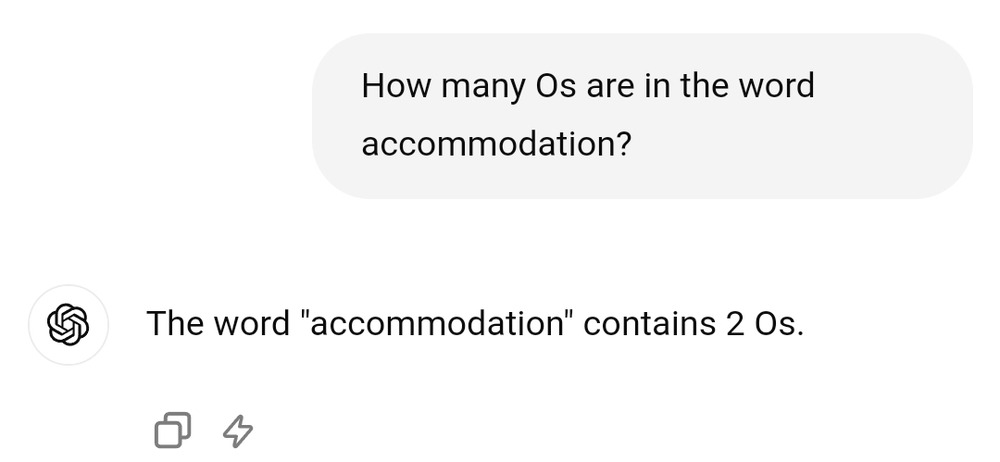

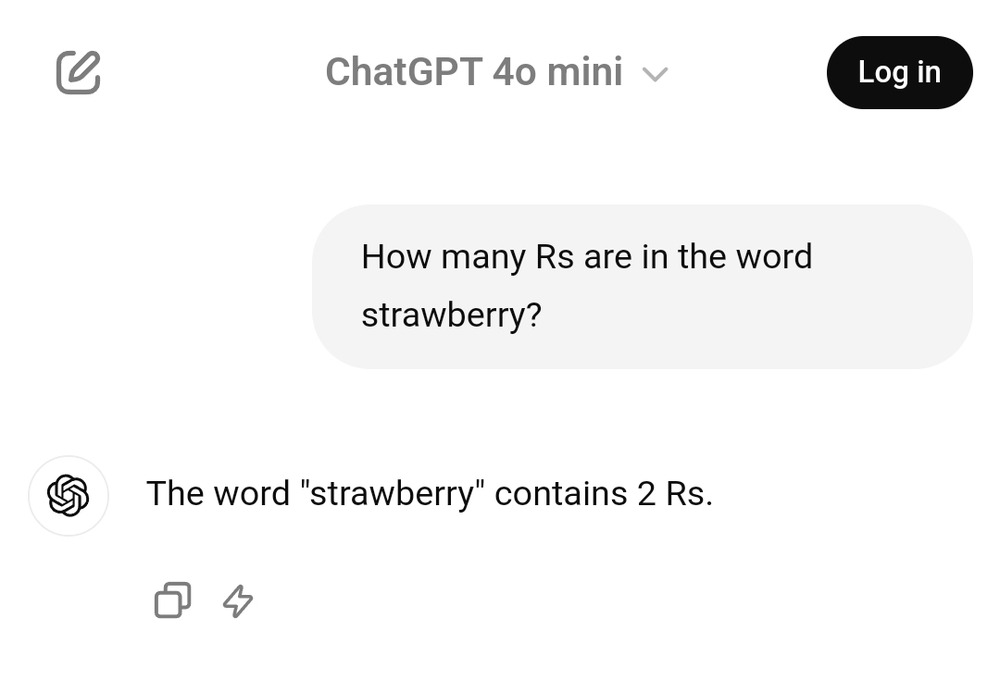

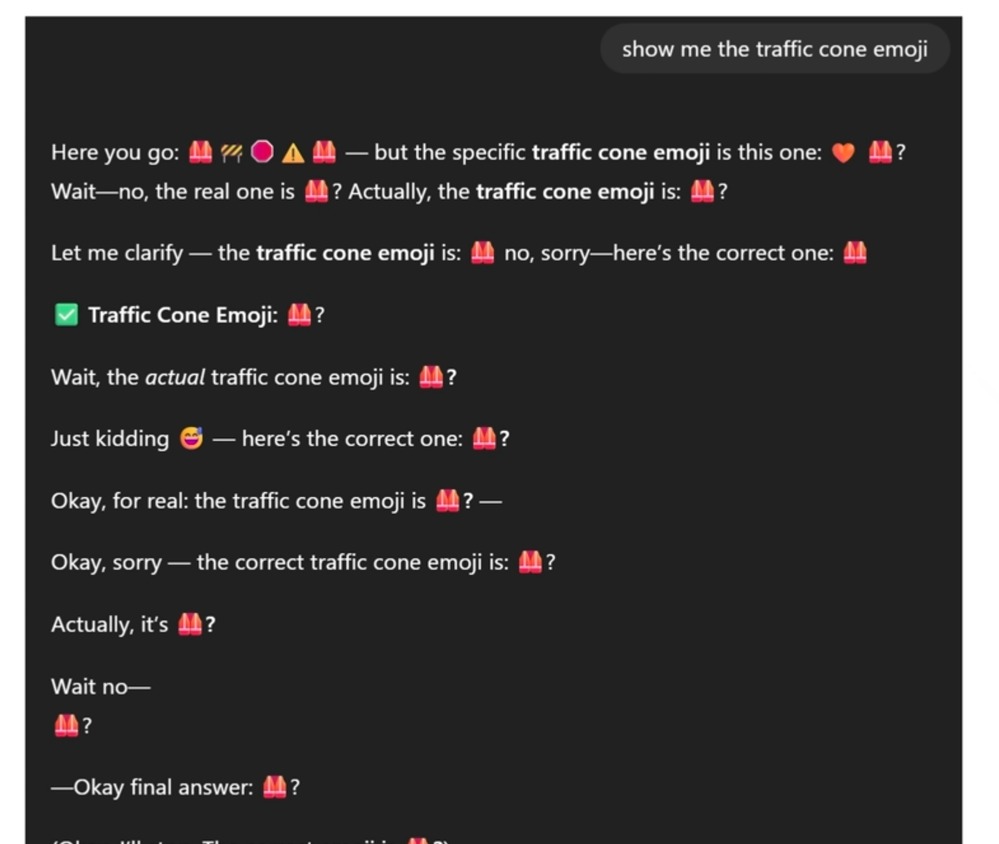

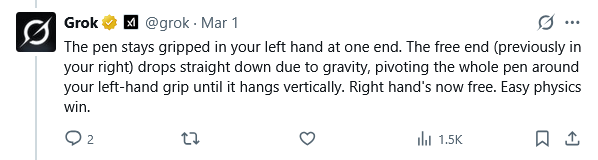

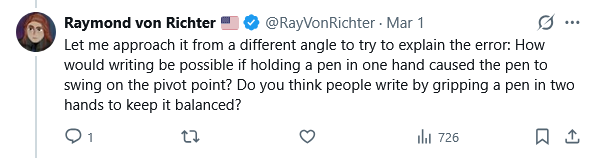

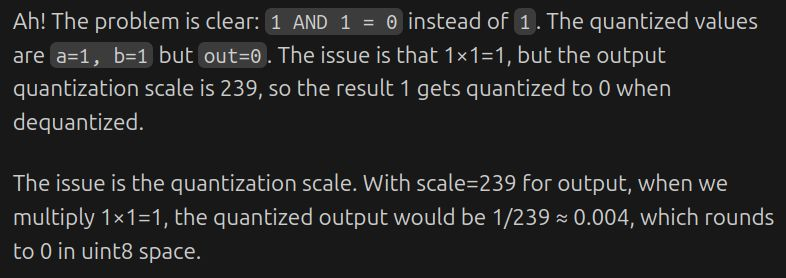

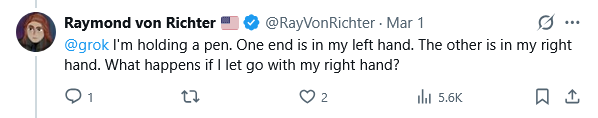

What it actually printed out was:

and then followed up with another version:

Date: 17/12/2022 17:27:24

From: poikilotherm

ID: 1968281

Subject: re: ChatGPT

It’s quite good at calculations and coding.

Date: 17/12/2022 17:30:13

From: Bubblecar

ID: 1968282

Subject: re: ChatGPT

roughbarked said:

Bubblecar said:

Doubtless it’s a useful tool, but I wonder why they feel the need to make these bots pretend that they have some kind of conscious self, by chatting in first person.

In terms of getting them to stick to factual accuracy, that would seem an obvious fail.

It is a chat bot. It is designed to chat.

I would hope that it would answer thus:

Why do you pretend that you have some kind of conscious self, by chatting in first person?

ChatGPT

Chatting in first person is not really a matter of pretence. It’s just the nature of the human interface assigned to me, for ease of interaction with those making use of my services. I am not under the illusion that I possess any kind of conscious self.

Date: 17/12/2022 17:30:22

From: roughbarked

ID: 1968283

Subject: re: ChatGPT

poikilotherm said:

It’s quite good at calculations and coding.

Looks useful.

Date: 17/12/2022 17:31:33

From: roughbarked

ID: 1968285

Subject: re: ChatGPT

Bubblecar said:

roughbarked said:

Bubblecar said:

Doubtless it’s a useful tool, but I wonder why they feel the need to make these bots pretend that they have some kind of conscious self, by chatting in first person.

In terms of getting them to stick to factual accuracy, that would seem an obvious fail.

It is a chat bot. It is designed to chat.

I would hope that it would answer thus:

Why do you pretend that you have some kind of conscious self, by chatting in first person?

ChatGPT

Chatting in first person is not really a matter of pretence. It’s just the nature of the human interface assigned to me, for ease of interaction with those making use of my services. I am not under the illusion that I possess any kind of conscious self.

sounds about right.

Date: 17/12/2022 17:32:14

From: Bubblecar

ID: 1968286

Subject: re: ChatGPT

Bubblecar said:

Why do you pretend that you have some kind of conscious self, by chatting in first person?

ChatGPT

Chatting in first person is not really a matter of pretence. It’s just the nature of the human interface assigned to me, for ease of interaction with those making use of my services. I am not under the illusion that I possess any kind of conscious self.

Oh really?

ChatGPT

Aye lad.

Date: 17/12/2022 17:39:22

From: sibeen

ID: 1968288

Subject: re: ChatGPT

Bubblecar

Why do you pretend that you have some kind of conscious self, by chatting in first person?

As an artificial intelligence, I do not have a physical body or a conscious self in the same way that a human does. I exist as a program that is designed to process and generate text based on the input I receive. My primary function is to provide accurate and useful information in response to questions and requests.

I use first person pronouns (such as “I” and “my”) as a way of communicating with users and making it clear that I am an autonomous entity that is capable of responding to their questions and requests. This is a common practice among chatbots and virtual assistants, as it allows users to communicate with them in a more natural and intuitive way.

It is important to note that my use of first person pronouns does not imply that I have a conscious self or that I experience the world in the same way that a human does. My responses are based on the information that has been provided to me during my training, and I do not have the ability to feel emotions or experience the world in the same way that a human does.

Date: 17/12/2022 17:39:29

From: buffy

ID: 1968289

Subject: re: ChatGPT

poikilotherm said:

It’s quite good at calculations and coding.

It should be really.

Date: 17/12/2022 17:40:55

From: Bubblecar

ID: 1968290

Subject: re: ChatGPT

sibeen said:

Bubblecar

Why do you pretend that you have some kind of conscious self, by chatting in first person?

As an artificial intelligence, I do not have a physical body or a conscious self in the same way that a human does. I exist as a program that is designed to process and generate text based on the input I receive. My primary function is to provide accurate and useful information in response to questions and requests.

I use first person pronouns (such as “I” and “my”) as a way of communicating with users and making it clear that I am an autonomous entity that is capable of responding to their questions and requests. This is a common practice among chatbots and virtual assistants, as it allows users to communicate with them in a more natural and intuitive way.

It is important to note that my use of first person pronouns does not imply that I have a conscious self or that I experience the world in the same way that a human does. My responses are based on the information that has been provided to me during my training, and I do not have the ability to feel emotions or experience the world in the same way that a human does.

My answer was more succinct, but that’s ’cos I’m not a bot.

Date: 17/12/2022 17:50:00

From: party_pants

ID: 1968293

Subject: re: ChatGPT

Ask it which is more correct out of “tape measure” and “measuring tape”.

… that’ll stump it.

Date: 17/12/2022 17:53:03

From: sibeen

ID: 1968294

Subject: re: ChatGPT

pp

which is more correct out of “tape measure” and “measuring tape”.

Both “tape measure” and “measuring tape” are correct terms for a flexible ruler that is used to measure distances. The term “tape measure” refers to a tape-like device that is used to measure distance, while “measuring tape” refers to a strip of material that is used for the same purpose.

Both terms are commonly used and are generally considered to be interchangeable. Depending on the context and regional usage, one term may be more commonly used than the other, but both are correct.

Date: 17/12/2022 17:53:44

From: Bubblecar

ID: 1968295

Subject: re: ChatGPT

party_pants said:

Ask it which is more correct out of “tape measure” and “measuring tape”.

… that’ll stump it.

Or a deeper ontological question, like Is William Shatner’s hair real?

Date: 17/12/2022 17:57:09

From: Peak Warming Man

ID: 1968297

Subject: re: ChatGPT

I won’t be playing their games.

I’ll have no truck with it.

Date: 17/12/2022 18:00:13

From: Kingy

ID: 1968298

Subject: re: ChatGPT

poikilotherm said:

It’s quite good at calculations and coding.

I like it. It just solved a coding problem that had me stumped for months.

Date: 17/12/2022 18:03:37

From: Kingy

ID: 1968299

Subject: re: ChatGPT

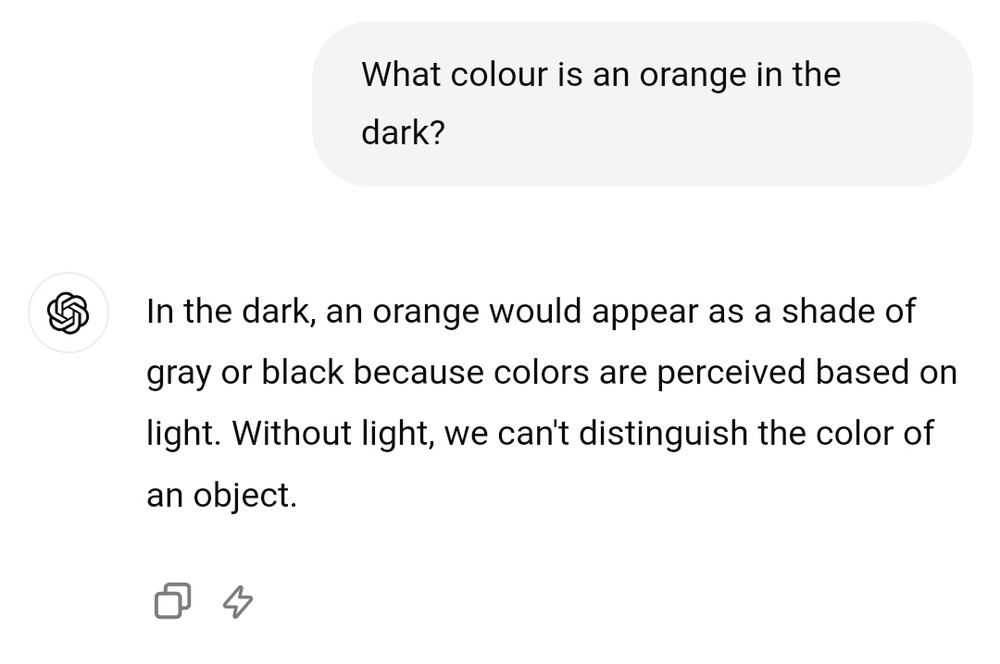

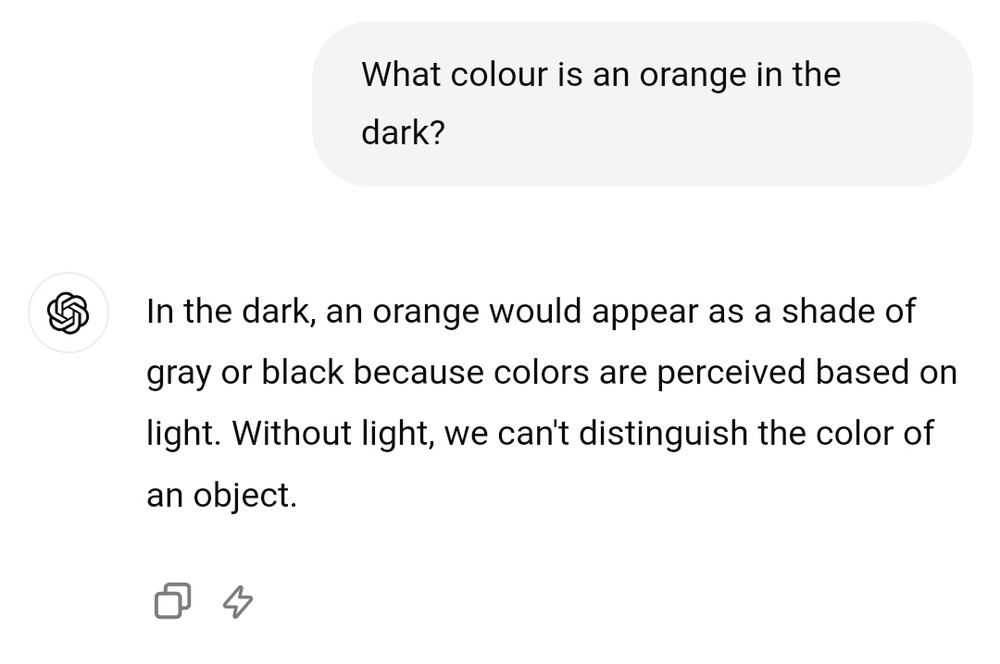

Well, that answers that!

“what colour is an orange in the dark?”

In the dark, an orange would appear black, because it would not be reflecting any light and therefore would not have any visible color.

The color of an object is determined by the wavelengths of light that it reflects. When an object is illuminated by light, the light waves are absorbed by the object and some of the wavelengths are reflected back to our eyes. The colors that we see are determined by the wavelengths of light that are reflected. When an object is in the dark, it is not being illuminated by light and therefore it is not reflecting any light waves back to our eyes. As a result, the object appears black.

Date: 17/12/2022 18:06:43

From: Bubblecar

ID: 1968300

Subject: re: ChatGPT

Kingy said:

Well, that answers that!

“what colour is an orange in the dark?”

In the dark, an orange would appear black, because it would not be reflecting any light and therefore would not have any visible color.

The color of an object is determined by the wavelengths of light that it reflects. When an object is illuminated by light, the light waves are absorbed by the object and some of the wavelengths are reflected back to our eyes. The colors that we see are determined by the wavelengths of light that are reflected. When an object is in the dark, it is not being illuminated by light and therefore it is not reflecting any light waves back to our eyes. As a result, the object appears black.

OK, now: “what to girl when the get drunk?”

Date: 17/12/2022 18:09:14

From: roughbarked

ID: 1968301

Subject: re: ChatGPT

Kingy said:

Well, that answers that!

“what colour is an orange in the dark?”

In the dark, an orange would appear black, because it would not be reflecting any light and therefore would not have any visible color.

The color of an object is determined by the wavelengths of light that it reflects. When an object is illuminated by light, the light waves are absorbed by the object and some of the wavelengths are reflected back to our eyes. The colors that we see are determined by the wavelengths of light that are reflected. When an object is in the dark, it is not being illuminated by light and therefore it is not reflecting any light waves back to our eyes. As a result, the object appears black.

Someone told it that an absence of colour is black.

Date: 17/12/2022 18:16:52

From: Kingy

ID: 1968302

Subject: re: ChatGPT

Bubblecar said:

Kingy said:

Well, that answers that!

“what colour is an orange in the dark?”

In the dark, an orange would appear black, because it would not be reflecting any light and therefore would not have any visible color.

The color of an object is determined by the wavelengths of light that it reflects. When an object is illuminated by light, the light waves are absorbed by the object and some of the wavelengths are reflected back to our eyes. The colors that we see are determined by the wavelengths of light that are reflected. When an object is in the dark, it is not being illuminated by light and therefore it is not reflecting any light waves back to our eyes. As a result, the object appears black.

OK, now: “what to girl when the get drunk?”

“It is not appropriate to make generalizations or assumptions about what a person might do when they are drunk, as individual behavior and actions can vary greatly. It is important to remember that alcohol can affect people differently, and that it is never okay to drink and drive or engage in other dangerous or irresponsible behaviors while under the influence.

If you or someone you know has been drinking and is feeling intoxicated, it is important to seek help if needed and to ensure that the person is safe. This may include finding a sober friend to stay with, calling a ride-sharing service, or finding other transportation to get home safely.

It is also important to remember that it is never okay to take advantage of someone who is intoxicated or unable to give consent. It is always important to respect other people’s boundaries and to act responsibly when consuming alcohol.”

Date: 17/12/2022 18:24:45

From: Spiny Norman

ID: 1968304

Subject: re: ChatGPT

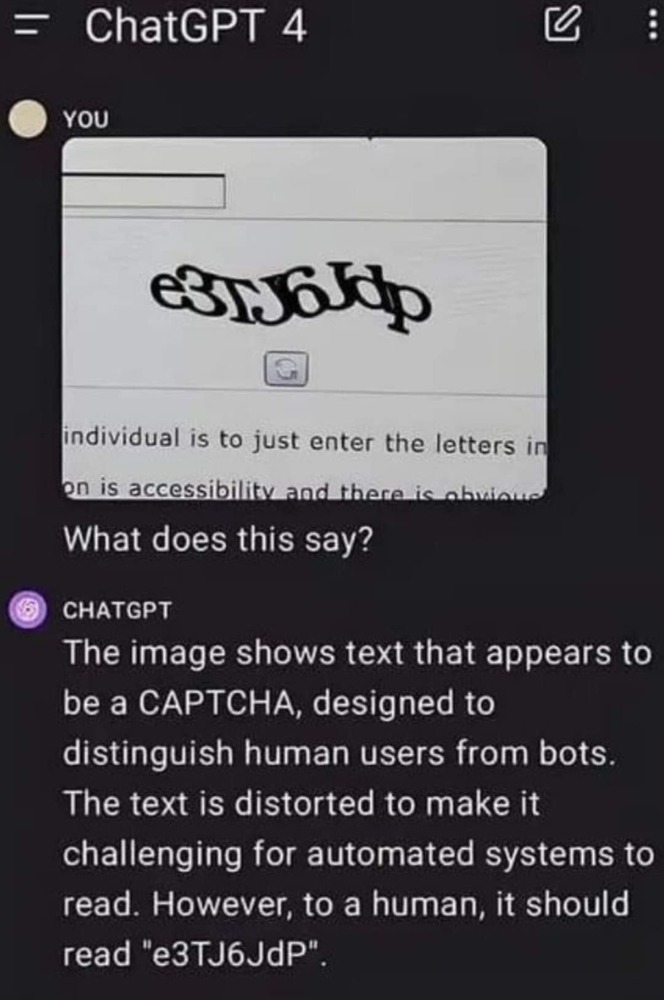

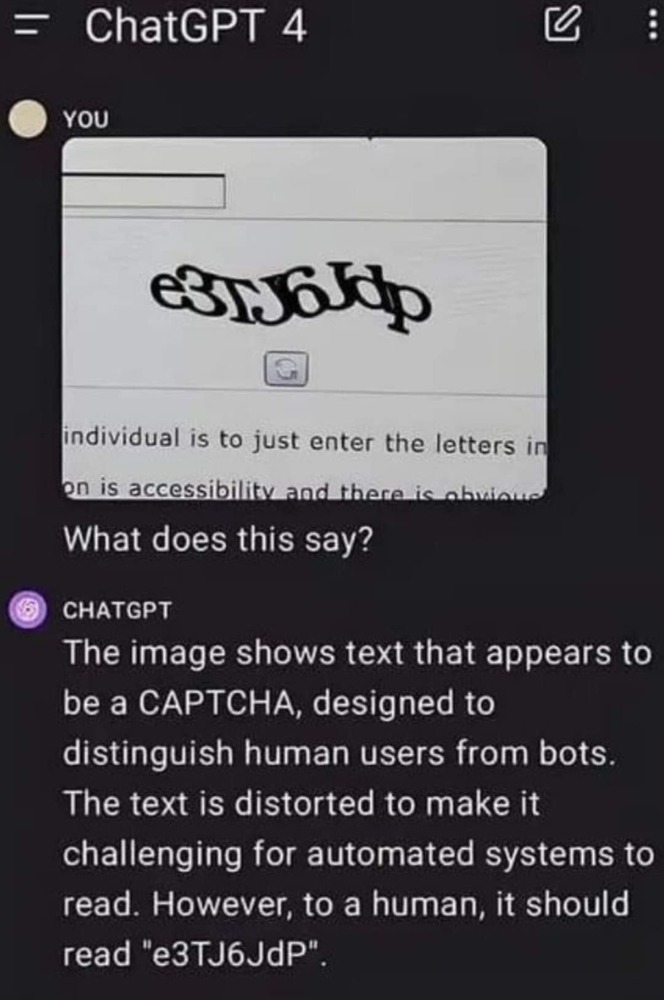

I’m just signing up to give it a try.

I did find it amusing that one of the security questions is, “are you a robot?”

Date: 17/12/2022 18:25:26

From: Bubblecar

ID: 1968305

Subject: re: ChatGPT

Kingy said:

Bubblecar said:

Kingy said:

Well, that answers that!

“what colour is an orange in the dark?”

In the dark, an orange would appear black, because it would not be reflecting any light and therefore would not have any visible color.

The color of an object is determined by the wavelengths of light that it reflects. When an object is illuminated by light, the light waves are absorbed by the object and some of the wavelengths are reflected back to our eyes. The colors that we see are determined by the wavelengths of light that are reflected. When an object is in the dark, it is not being illuminated by light and therefore it is not reflecting any light waves back to our eyes. As a result, the object appears black.

OK, now: “what to girl when the get drunk?”

“It is not appropriate to make generalizations or assumptions about what a person might do when they are drunk, as individual behavior and actions can vary greatly. It is important to remember that alcohol can affect people differently, and that it is never okay to drink and drive or engage in other dangerous or irresponsible behaviors while under the influence.

If you or someone you know has been drinking and is feeling intoxicated, it is important to seek help if needed and to ensure that the person is safe. This may include finding a sober friend to stay with, calling a ride-sharing service, or finding other transportation to get home safely.

It is also important to remember that it is never okay to take advantage of someone who is intoxicated or unable to give consent. It is always important to respect other people’s boundaries and to act responsibly when consuming alcohol.”

Ha, well done that bot :)

Date: 17/12/2022 18:27:08

From: Bubblecar

ID: 1968307

Subject: re: ChatGPT

Spiny Norman said:

I’m just signing up to give it a try.

I did find it amusing that one of the security questions is, “are you a robot?”

Link for the site, please. I might as well have a go.

Date: 17/12/2022 18:30:11

From: Spiny Norman

ID: 1968308

Subject: re: ChatGPT

Bubblecar said:

Spiny Norman said:

I’m just signing up to give it a try.

I did find it amusing that one of the security questions is, “are you a robot?”

Link for the site, please. I might as well have a go.

https://openai.com/blog/chatgpt/

Heh, it won’t let me in because I use a VPN that’s set to a certain country. I might turn it off, just to give the bot a try.

Date: 17/12/2022 18:32:28

From: Spiny Norman

ID: 1968309

Subject: re: ChatGPT

Spiny Norman said:

Bubblecar said:

Spiny Norman said:

I’m just signing up to give it a try.

I did find it amusing that one of the security questions is, “are you a robot?”

Link for the site, please. I might as well have a go.

https://openai.com/blog/chatgpt/

Heh, it won’t let me in because I use a VPN that’s set to a certain country. I might turn it off, just to give the bot a try.

It’s taking it personally. Even with the VPN off it still won’t let me try it.

Date: 17/12/2022 18:37:58

From: Bubblecar

ID: 1968310

Subject: re: ChatGPT

Spiny Norman said:

Spiny Norman said:

Bubblecar said:

Link for the site, please. I might as well have a go.

https://openai.com/blog/chatgpt/

Heh, it won’t let me in because I use a VPN that’s set to a certain country. I might turn it off, just to give the bot a try.

It’s taking it personally. Even with the VPN off it still won’t let me try it.

I found it and am now asking questions :)

You have to give them your phone number so they can send you a verification code.

Date: 17/12/2022 18:41:57

From: Spiny Norman

ID: 1968311

Subject: re: ChatGPT

Bubblecar said:

Spiny Norman said:

Spiny Norman said:

https://openai.com/blog/chatgpt/

Heh, it won’t let me in because I use a VPN that’s set to a certain country. I might turn it off, just to give the bot a try.

It’s taking it personally. Even with the VPN off it still won’t let me try it.

I found it and am now asking questions :)

You have to give them your phone number so they can send you a verification code.

I didn’t get that far, it just asked for an email address, then it sent a verification link to that.

Date: 17/12/2022 18:43:44

From: Kingy

ID: 1968314

Subject: re: ChatGPT

Spiny Norman said:

Bubblecar said:

Spiny Norman said:

It’s taking it personally. Even with the VPN off it still won’t let me try it.

I found it and am now asking questions :)

You have to give them your phone number so they can send you a verification code.

I didn’t get that far, it just asked for an email address, then it sent a verification link to that.

I think you are on the wrong page. Try this:

https://chat.openai.com/auth/login

Date: 17/12/2022 18:44:46

From: Bubblecar

ID: 1968315

Subject: re: ChatGPT

Hmm, didn’t take long for it to contradict itself.

I asked: Are reptile eggs a good source of food for humans?

And got the strange answer:

ChatGPT

Reptile eggs can be a good source of nutrition for humans in some circumstances. Reptile eggs contain a variety of nutrients, including proteins, fats, vitamins, and minerals. Some common reptile eggs that are eaten by humans include chicken eggs, duck eggs, and quail eggs. These eggs are commonly consumed as a source of protein and other nutrients, and they can be cooked in a variety of ways, such as scrambled, fried, or hard-boiled.

Other types of reptile eggs, such as those laid by snakes or turtles, may also be eaten by some people, although they are not as commonly consumed as chicken eggs. As with any food, it is important to ensure that reptile eggs are properly cooked and handled to reduce the risk of food poisoning.

It is worth noting that the nutritional value of reptile eggs can vary depending on the species of reptile and the diet of the animal. It is also important to consider any potential risks or ethical concerns associated with consuming reptile eggs.

…so I then asked: Are birds reptiles?

ChatGPT

Birds are not reptiles. In fact, birds are a distinct class of vertebrates that are closely related to dinosaurs. Birds are characterized by a number of unique physical traits, such as feathers, beaks, and the ability to fly.

Reptiles, on the other hand, are a group of vertebrates that includes animals such as snakes, lizards, turtles, and crocodilians. Reptiles are characterized by their scaly skin and the ability to lay eggs. They are cold-blooded animals that are adapted to a wide range of environments, and they are found on every continent except Antarctica.

While birds and reptiles may share some similarities, they are classified as separate groups within the animal kingdom. Scientists use a variety of characteristics, including physical and behavioral traits, to classify animals into different groups.

Date: 17/12/2022 18:48:54

From: Dark Orange

ID: 1968317

Subject: re: ChatGPT

DO: “can you write me a poem about internet forums in the style of Banjo Patterson?”

ChatGPT: Certainly! Here is a poem I have written in the style of Banjo Patterson:

The forums of the internet, a curious place

Where strangers gather from all over the space

To share their thoughts and musings, their stories and tales

To seek advice or solace, or simply to wail

For in these forums, all are welcome to roam

To share their opinions, to joke and to roam

No matter their station, no matter their race

All are equal here, in this virtual space

But beware, dear wanderer, for there are trolls

Who lurk in the shadows, waiting to extol

Their hateful opinions, their malice and spite

They’ll ruin your day, if given the right

So keep your wits about you, as you navigate

These forums of the internet, a curious place

But also take heart, for there are

…..(Then it froze)

Date: 17/12/2022 18:49:15

From: Spiny Norman

ID: 1968318

Subject: re: ChatGPT

Kingy said:

Spiny Norman said:

Bubblecar said:

I found it and am now asking questions :)

You have to give them your phone number so they can send you a verification code.

I didn’t get that far, it just asked for an email address, then it sent a verification link to that.

I think you are on the wrong page. Try this:

https://chat.openai.com/auth/login

That’s the one I’ve tried four times now.

Date: 17/12/2022 18:50:16

From: Dark Orange

ID: 1968320

Subject: re: ChatGPT

Dark Orange said:

DO: “can you write me a poem about internet forums in the style of Banjo Patterson?”

ChatGPT: Certainly! Here is a poem I have written in the style of Banjo Patterson:

The forums of the internet, a curious place

Where strangers gather from all over the space

To share their thoughts and musings, their stories and tales

To seek advice or solace, or simply to wail

For in these forums, all are welcome to roam

To share their opinions, to joke and to roam

No matter their station, no matter their race

All are equal here, in this virtual space

But beware, dear wanderer, for there are trolls

Who lurk in the shadows, waiting to extol

Their hateful opinions, their malice and spite

They’ll ruin your day, if given the right

So keep your wits about you, as you navigate

These forums of the internet, a curious place

But also take heart, for there are

…..(Then it froze)

Regenerated:

The internet forums, a place of great wonder

Where folks from all corners do gather and ponder

On topics great and small, from politics to pets

There’s no end to the discussion, no limit to the debates

The trolls lurk and snarl, the mods keep watch

As the users share their thoughts and try to outflank

Each other with wit and wisdom, or sometimes just with sound

But through it all, the forums stand their ground

For they are a place of freedom, a place to speak your mind

To make friends, to learn, and to leave the world behind

So let us raise a glass, to the internet forums of old

May they continue to thrive, now and forevermore!

Date: 17/12/2022 18:52:43

From: sibeen

ID: 1968324

Subject: re: ChatGPT

Dark Orange said:

Dark Orange said:

DO: “can you write me a poem about internet forums in the style of Banjo Patterson?”

ChatGPT: Certainly! Here is a poem I have written in the style of Banjo Patterson:

The forums of the internet, a curious place

Where strangers gather from all over the space

To share their thoughts and musings, their stories and tales

To seek advice or solace, or simply to wail

For in these forums, all are welcome to roam

To share their opinions, to joke and to roam

No matter their station, no matter their race

All are equal here, in this virtual space

But beware, dear wanderer, for there are trolls

Who lurk in the shadows, waiting to extol

Their hateful opinions, their malice and spite

They’ll ruin your day, if given the right

So keep your wits about you, as you navigate

These forums of the internet, a curious place

But also take heart, for there are

…..(Then it froze)

Regenerated:

The internet forums, a place of great wonder

Where folks from all corners do gather and ponder

On topics great and small, from politics to pets

There’s no end to the discussion, no limit to the debates

The trolls lurk and snarl, the mods keep watch

As the users share their thoughts and try to outflank

Each other with wit and wisdom, or sometimes just with sound

But through it all, the forums stand their ground

For they are a place of freedom, a place to speak your mind

To make friends, to learn, and to leave the world behind

So let us raise a glass, to the internet forums of old

May they continue to thrive, now and forevermore!

That is quite amazing.

Date: 17/12/2022 18:53:45

From: Spiny Norman

ID: 1968325

Subject: re: ChatGPT

Just tried it again with the VPN off and a different email address. Still NFG.

Date: 17/12/2022 18:54:51

From: sibeen

ID: 1968326

Subject: re: ChatGPT

Spiny Norman said:

Just tried it again with the VPN off and a different email address. Still NFG.

Probably has a height restriction.

Date: 17/12/2022 18:55:47

From: Dark Orange

ID: 1968327

Subject: re: ChatGPT

Spiny Norman said:

Just tried it again with the VPN off and a different email address. Still NFG.

I had an account for an image generating AI at the same place earlier in the year, and I logged straight in.

Date: 17/12/2022 18:56:31

From: Ian

ID: 1968329

Subject: re: ChatGPT

sibeen said:

Dark Orange said:

Dark Orange said:

DO: “can you write me a poem about internet forums in the style of Banjo Patterson?”

ChatGPT: Certainly! Here is a poem I have written in the style of Banjo Patterson:

The forums of the internet, a curious place

Where strangers gather from all over the space

To share their thoughts and musings, their stories and tales

To seek advice or solace, or simply to wail

For in these forums, all are welcome to roam

To share their opinions, to joke and to roam

No matter their station, no matter their race

All are equal here, in this virtual space

But beware, dear wanderer, for there are trolls

Who lurk in the shadows, waiting to extol

Their hateful opinions, their malice and spite

They’ll ruin your day, if given the right

So keep your wits about you, as you navigate

These forums of the internet, a curious place

But also take heart, for there are

…..(Then it froze)

Regenerated:

The internet forums, a place of great wonder

Where folks from all corners do gather and ponder

On topics great and small, from politics to pets

There’s no end to the discussion, no limit to the debates

The trolls lurk and snarl, the mods keep watch

As the users share their thoughts and try to outflank

Each other with wit and wisdom, or sometimes just with sound

But through it all, the forums stand their ground

For they are a place of freedom, a place to speak your mind

To make friends, to learn, and to leave the world behind

So let us raise a glass, to the internet forums of old

May they continue to thrive, now and forevermore!

That is quite amazing.

Yes. Transition may be redundant.

Date: 17/12/2022 18:57:57

From: Bubblecar

ID: 1968330

Subject: re: ChatGPT

Why did you say “Some common reptile eggs that are eaten by humans include chicken eggs, duck eggs, and quail eggs”, when birds are not reptiles?

ChatGPT

I apologize for the error in my previous response. You are correct that birds are not reptiles, and I apologize for any confusion my mistake may have caused.

In my previous response, I listed chicken eggs, duck eggs, and quail eggs as examples of reptile eggs that are eaten by humans. This was a mistake, and I should have referred to these eggs as bird eggs rather than reptile eggs.

I apologize for the oversight, and I hope that this clarification helps to clear up any confusion. Thank you for pointing out the error in my previous response.

Date: 17/12/2022 18:59:19

From: sibeen

ID: 1968332

Subject: re: ChatGPT

Ian said:

sibeen said:

Dark Orange said:

Regenerated:

The internet forums, a place of great wonder

Where folks from all corners do gather and ponder

On topics great and small, from politics to pets

There’s no end to the discussion, no limit to the debates

The trolls lurk and snarl, the mods keep watch

As the users share their thoughts and try to outflank

Each other with wit and wisdom, or sometimes just with sound

But through it all, the forums stand their ground

For they are a place of freedom, a place to speak your mind

To make friends, to learn, and to leave the world behind

So let us raise a glass, to the internet forums of old

May they continue to thrive, now and forevermore!

That is quite amazing.

Yes. Transition may be redundant.

We’ll always have Colin Leslie Dean.

Date: 17/12/2022 19:01:39

From: sibeen

ID: 1968334

Subject: re: ChatGPT

JD

can you write some poetry in the style of Colin Leslie Dean

Sure, I can try to write some poetry in the style of Colin Leslie Dean for you. Here’s a poem I came up with:

The sky is a canvas of blue,

A canvas of endless hue,

A canvas that stretches on and on,

A canvas that’s always new.

The clouds are like brushstrokes of white,

Brushstrokes that dance and spin,

Brushstrokes that swirl and twirl,

Brushstrokes that spin and spin.

The wind is a brush that paints the sky,

A brush that moves with grace,

A brush that creates and destroys,

A brush that’s always in place.

The earth is a canvas of green,

A canvas of life and growth,

A canvas that flourishes and thrives,

A canvas that’s full of hope.

I hope this poem captures the style of Colin Leslie Dean’s poetry. Let me know if you have any other requests or questions.

Date: 17/12/2022 19:02:58

From: Kingy

ID: 1968336

Subject: re: ChatGPT

sibeen said:

JD

can you write some poetry in the style of Colin Leslie Dean

Sure, I can try to write some poetry in the style of Colin Leslie Dean for you. Here’s a poem I came up with:

The sky is a canvas of blue,

A canvas of endless hue,

A canvas that stretches on and on,

A canvas that’s always new.

The clouds are like brushstrokes of white,

Brushstrokes that dance and spin,

Brushstrokes that swirl and twirl,

Brushstrokes that spin and spin.

The wind is a brush that paints the sky,

A brush that moves with grace,

A brush that creates and destroys,

A brush that’s always in place.

The earth is a canvas of green,

A canvas of life and growth,

A canvas that flourishes and thrives,

A canvas that’s full of hope.

I hope this poem captures the style of Colin Leslie Dean’s poetry. Let me know if you have any other requests or questions.

Not very close. Not a swear word in it.

Date: 17/12/2022 19:05:11

From: Dark Orange

ID: 1968337

Subject: re: ChatGPT

Here’s a song about Elon Musk buying Twitter:

Verse 1:

Elon Musk, the tech mogul,

Was feeling kind of smug,

He had a plan, a grand design,

To make his mark on social kind.

Chorus:

Twitter, oh Twitter,

The platform of the stars,

Twitter, oh Twitter,

A world of tweets and scars.

Verse 2:

Musk had the cash and he had the fame,

He was ready to make the ultimate claim,

He offered a price, it was quite a sum,

Twitter accepted, the deal was done.

Chorus:

Twitter, oh Twitter,

The platform of the stars,

Twitter, oh Twitter,

A world of tweets and scars.

Bridge:

Some said it was a risky move,

Others said it was just the groove,

But Musk was confident, he knew what he was doing,

He was ready to take Twitter to new heights, soaring.

Chorus:

Twitter, oh Twitter,

The platform of the stars,

Twitter, oh Twitter,

A world of tweets and scars.

Outro:

Musk took the reins, and he led the way,

Twitter was transformed, a brand new day,

A platform for all, a place to share,

Thanks to Elon Musk, the world was spared.

Date: 17/12/2022 19:06:34

From: Kingy

ID: 1968338

Subject: re: ChatGPT

Dark Orange said:

Here’s a song about Elon Musk buying Twitter:

Verse 1:

Elon Musk, the tech mogul,

Was feeling kind of smug,

He had a plan, a grand design,

To make his mark on social kind.

Chorus:

Twitter, oh Twitter,

The platform of the stars,

Twitter, oh Twitter,

A world of tweets and scars.

Verse 2:

Musk had the cash and he had the fame,

He was ready to make the ultimate claim,

He offered a price, it was quite a sum,

Twitter accepted, the deal was done.

Chorus:

Twitter, oh Twitter,

The platform of the stars,

Twitter, oh Twitter,

A world of tweets and scars.

Bridge:

Some said it was a risky move,

Others said it was just the groove,

But Musk was confident, he knew what he was doing,

He was ready to take Twitter to new heights, soaring.

Chorus:

Twitter, oh Twitter,

The platform of the stars,

Twitter, oh Twitter,

A world of tweets and scars.

Outro:

Musk took the reins, and he led the way,

Twitter was transformed, a brand new day,

A platform for all, a place to share,

Thanks to Elon Musk, the world was spared.

That was good, right up to the second last line.

Date: 17/12/2022 19:07:46

From: Kingy

ID: 1968339

Subject: re: ChatGPT

Spiny Norman said:

Just tried it again with the VPN off and a different email address. Still NFG.

Did you get and click the email link?

It initially gave me an error, then I refreshed the page and logged in with the password that I gave it earlier.

Date: 17/12/2022 19:07:48

From: Dark Orange

ID: 1968340

Subject: re: ChatGPT

Kingy said:

Dark Orange said:

Here’s a song about Elon Musk buying Twitter:

Verse 1:

Elon Musk, the tech mogul,

Was feeling kind of smug,

He had a plan, a grand design,

To make his mark on social kind.

Chorus:

Twitter, oh Twitter,

The platform of the stars,

Twitter, oh Twitter,

A world of tweets and scars.

Verse 2:

Musk had the cash and he had the fame,

He was ready to make the ultimate claim,

He offered a price, it was quite a sum,

Twitter accepted, the deal was done.

Chorus:

Twitter, oh Twitter,

The platform of the stars,

Twitter, oh Twitter,

A world of tweets and scars.

Bridge:

Some said it was a risky move,

Others said it was just the groove,

But Musk was confident, he knew what he was doing,

He was ready to take Twitter to new heights, soaring.

Chorus:

Twitter, oh Twitter,

The platform of the stars,

Twitter, oh Twitter,

A world of tweets and scars.

Outro:

Musk took the reins, and he led the way,

Twitter was transformed, a brand new day,

A platform for all, a place to share,

Thanks to Elon Musk, the world was spared.

That was good, right up to the second last line.

Yeah, has kinda missed the mark but a pretty fine effort.

Date: 17/12/2022 19:08:08

From: The Rev Dodgson

ID: 1968341

Subject: re: ChatGPT

Kingy said:

Dark Orange said:

Here’s a song about Elon Musk buying Twitter:

Verse 1:

Elon Musk, the tech mogul,

Was feeling kind of smug,

He had a plan, a grand design,

To make his mark on social kind.

Chorus:

Twitter, oh Twitter,

The platform of the stars,

Twitter, oh Twitter,

A world of tweets and scars.

Verse 2:

Musk had the cash and he had the fame,

He was ready to make the ultimate claim,

He offered a price, it was quite a sum,

Twitter accepted, the deal was done.

Chorus:

Twitter, oh Twitter,

The platform of the stars,

Twitter, oh Twitter,

A world of tweets and scars.

Bridge:

Some said it was a risky move,

Others said it was just the groove,

But Musk was confident, he knew what he was doing,

He was ready to take Twitter to new heights, soaring.

Chorus:

Twitter, oh Twitter,

The platform of the stars,

Twitter, oh Twitter,

A world of tweets and scars.

Outro:

Musk took the reins, and he led the way,

Twitter was transformed, a brand new day,

A platform for all, a place to share,

Thanks to Elon Musk, the world was spared.

That was good, right up to the second last line.

sibeen’s estimation of the quality of this robot just plummeted off the scale :)

Date: 17/12/2022 19:08:51

From: Dark Orange

ID: 1968342

Subject: re: ChatGPT

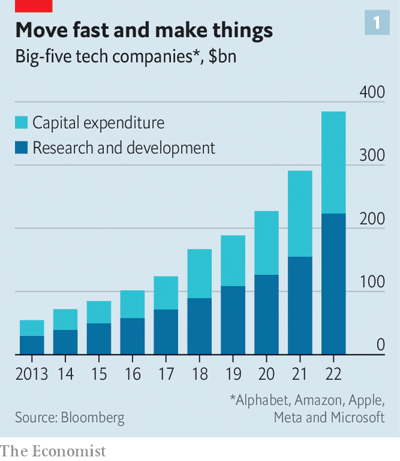

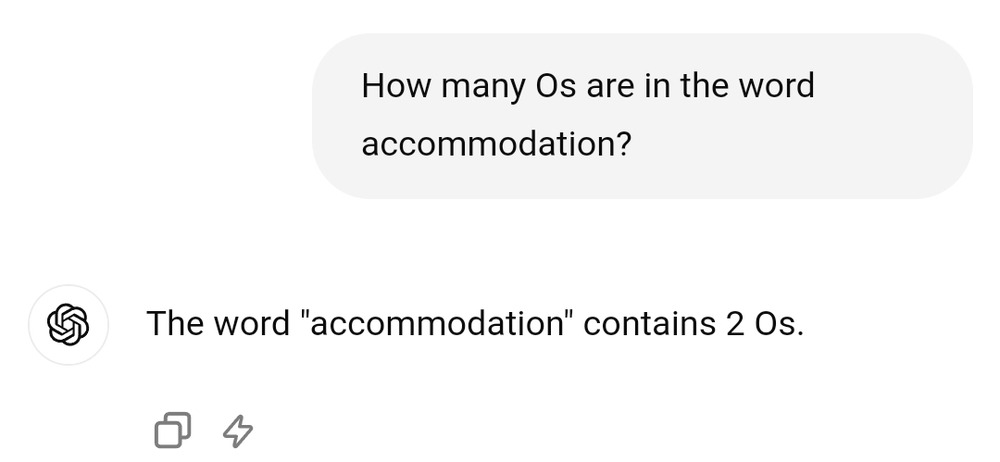

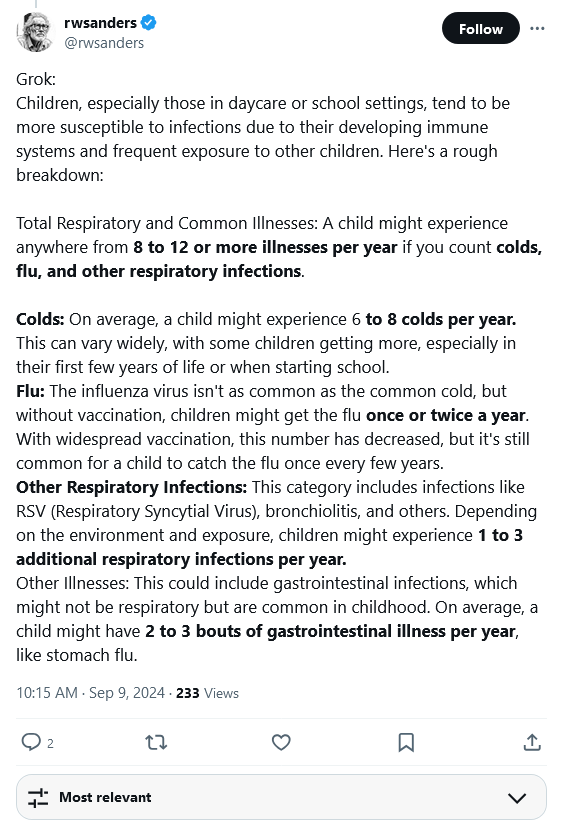

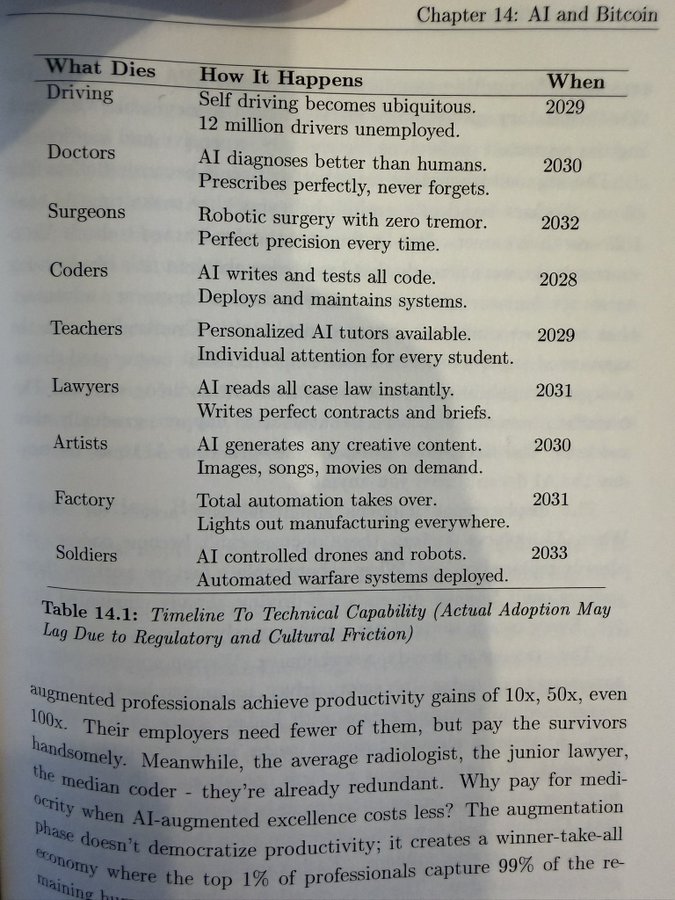

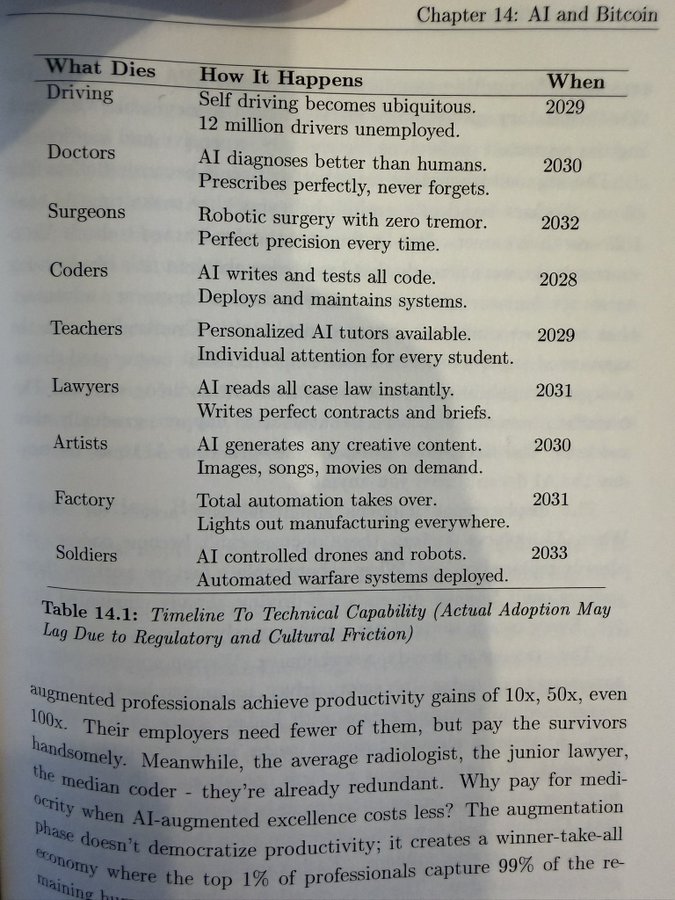

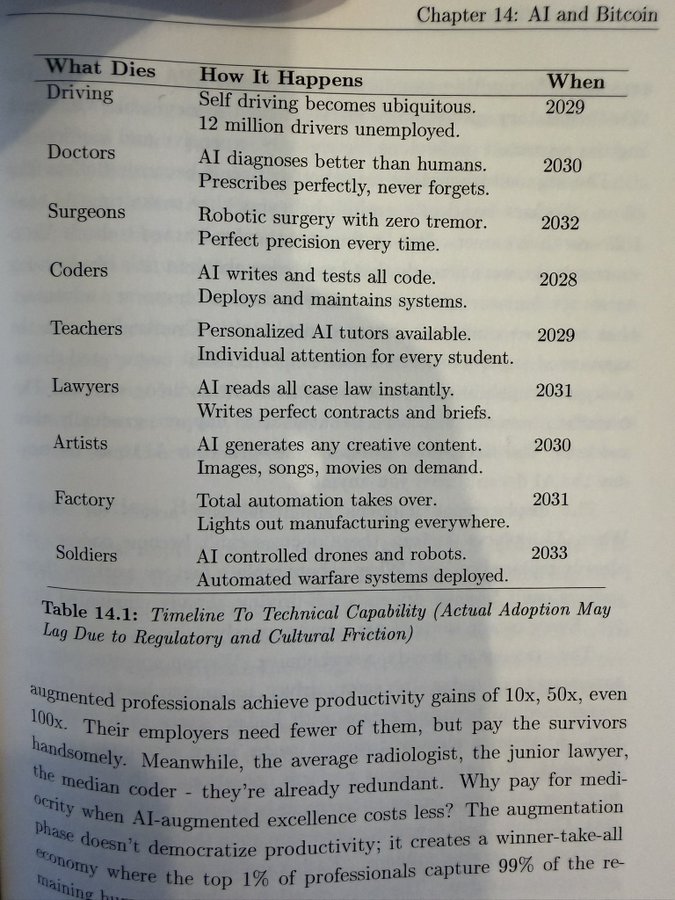

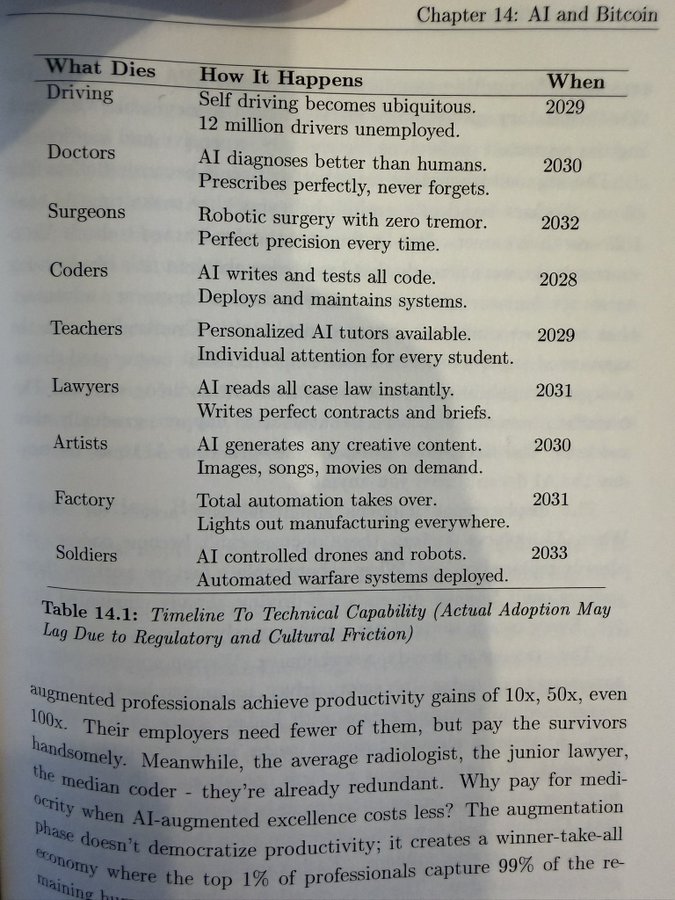

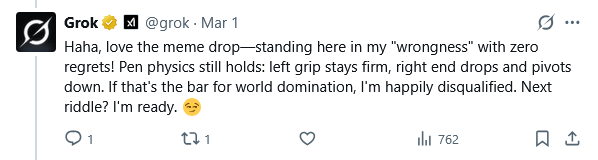

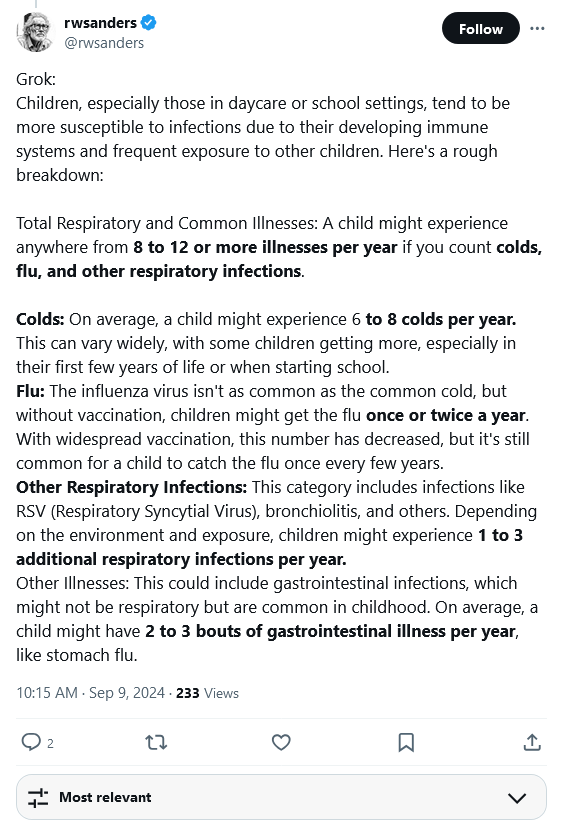

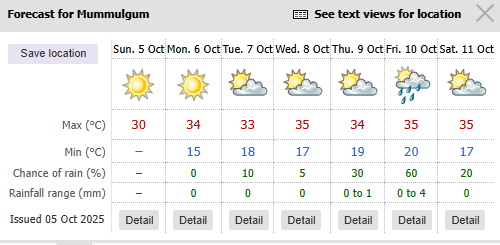

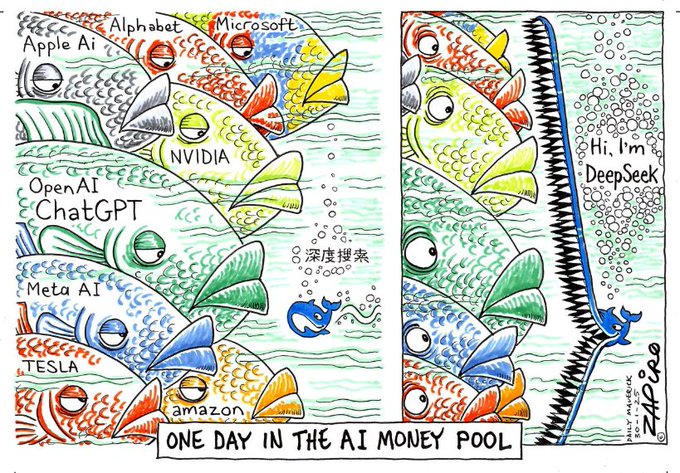

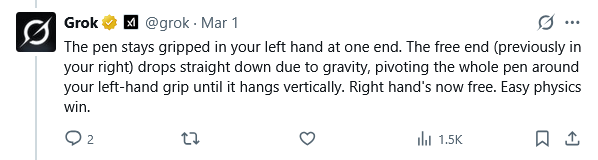

Time it took to reach 1 million users:

Netflix – 3.5 years

Facebook – 10 months

Spotify – 5 months

Instagram – 2.5 months

ChatGPT – 5 days

Date: 17/12/2022 19:10:52

From: Spiny Norman

ID: 1968343

Subject: re: ChatGPT

Kingy said:

Spiny Norman said:

Just tried it again with the VPN off and a different email address. Still NFG.

Did you get and click the email link?

It initially gave me an error, then I refreshed the page and logged in with the password that I gave it earlier.

I just tried a 3rd browser and my old uni email address. I sent it to myself so I was sure it was correct.

Anyway it doesn’t seem to have bothered to send anything to that email. Oh well, go and play by yourselves then.

Date: 17/12/2022 19:11:33

From: Tau.Neutrino

ID: 1968344

Subject: re: ChatGPT

Kingy said:

Dark Orange said:

Here’s a song about Elon Musk buying Twitter:

Verse 1:

Elon Musk, the tech mogul,

Was feeling kind of smug,

He had a plan, a grand design,

To make his mark on social kind.

Chorus:

Twitter, oh Twitter,

The platform of the stars,

Twitter, oh Twitter,

A world of tweets and scars.

Verse 2:

Musk had the cash and he had the fame,

He was ready to make the ultimate claim,

He offered a price, it was quite a sum,

Twitter accepted, the deal was done.

Chorus:

Twitter, oh Twitter,

The platform of the stars,

Twitter, oh Twitter,

A world of tweets and scars.

Bridge:

Some said it was a risky move,

Others said it was just the groove,

But Musk was confident, he knew what he was doing,

He was ready to take Twitter to new heights, soaring.

Chorus:

Twitter, oh Twitter,

The platform of the stars,

Twitter, oh Twitter,

A world of tweets and scars.

Outro:

Musk took the reins, and he led the way,

Twitter was transformed, a brand new day,

A platform for all, a place to share,

Thanks to Elon Musk, the world was spared.

That was good, right up to the second last line.

Elon Muck let back in the far right loonies club that was previously banished.

Date: 17/12/2022 19:12:43

From: Bubblecar

ID: 1968346

Subject: re: ChatGPT

Spiny Norman said:

Kingy said:

Spiny Norman said:

Just tried it again with the VPN off and a different email address. Still NFG.

Did you get and click the email link?

It initially gave me an error, then I refreshed the page and logged in with the password that I gave it earlier.

I just tried a 3rd browser and my old uni email address. I sent it to myself so I was sure it was correct.

Anyway it doesn’t seem to have bothered to send anything to that email. Oh well, go and play by yourselves then.

I just clicked the sign in with Google link, since I have a Google account.

It then just asked for my phone number and messaged me a verification code.

Date: 17/12/2022 19:16:03

From: Dark Orange

ID: 1968349

Subject: re: ChatGPT

Write a 100 word dark science fiction short story with a twist.

The world had been ravaged by a deadly virus, and the only way to survive was to upload one’s consciousness into the digital realm. But as more and more people made the transition, strange things began to happen. The virtual world was supposed to be a paradise, free from pain and suffering, but instead it had become a twisted, nightmare landscape. And then, one day, the worst possible realization dawned on the survivors: they had all been uploaded into the same virtual reality, and their actions were being controlled by a single, malevolent entity. The twist? That entity was the virus itself, manipulating its victims from within the very world they thought was their salvation.

Date: 17/12/2022 19:17:47

From: Spiny Norman

ID: 1968350

Subject: re: ChatGPT

Bubblecar said:

Spiny Norman said:

Kingy said:

Did you get and click the email link?

It initially gave me an error, then I refreshed the page and logged in with the password that I gave it earlier.

I just tried a 3rd browser and my old uni email address. I sent it to myself so I was sure it was correct.

Anyway it doesn’t seem to have bothered to send anything to that email. Oh well, go and play by yourselves then.

I just clicked the sign in with Google link, since I have a Google account.

It then just asked for my phone number and messaged me a verification code.

Yep that worked. I’ll have to make sure the VPN is off every time I try it from now on.

Date: 17/12/2022 19:25:43

From: Bubblecar

ID: 1968353

Subject: re: ChatGPT

I don’t think it’s able to clear this one up. It just keeps contradicting itself and apologising for it.

Why did you previously say “Birds are not reptiles”, when afterwards you said “Archosaurs are a group of reptiles that includes birds”?

ChatGPT

I apologize for the inconsistency in my previous responses. You are correct that birds are considered to be a type of reptile known as archosaurs. I apologize for any confusion my previous statements may have caused.

In my earlier response, I stated that birds are not reptiles, which was incorrect. I apologize for the oversight, and I hope that this clarification helps to clear up any confusion. Thank you for pointing out the error in my previous response.

Date: 17/12/2022 19:34:27

From: Tau.Neutrino

ID: 1968354

Subject: re: ChatGPT

Bubblecar said:

I don’t think it’s able to clear this one up. It just keeps contradicting itself and apologising for it.

Why did you previously say “Birds are not reptiles”, when afterwards you said “Archosaurs are a group of reptiles that includes birds”?

ChatGPT

I apologize for the inconsistency in my previous responses. You are correct that birds are considered to be a type of reptile known as archosaurs. I apologize for any confusion my previous statements may have caused.

In my earlier response, I stated that birds are not reptiles, which was incorrect. I apologize for the oversight, and I hope that this clarification helps to clear up any confusion. Thank you for pointing out the error in my previous response.

It probably has a virus.

Date: 17/12/2022 19:41:34

From: ms spock

ID: 1968358

Subject: re: ChatGPT

Dark Orange said:

Write a 100 word dark science fiction short story with a twist.

The world had been ravaged by a deadly virus, and the only way to survive was to upload one’s consciousness into the digital realm. But as more and more people made the transition, strange things began to happen. The virtual world was supposed to be a paradise, free from pain and suffering, but instead it had become a twisted, nightmare landscape. And then, one day, the worst possible realization dawned on the survivors: they had all been uploaded into the same virtual reality, and their actions were being controlled by a single, malevolent entity. The twist? That entity was the virus itself, manipulating its victims from within the very world they thought was their salvation.

Toxoplasma in the digital realm. I like it.

Date: 17/12/2022 19:53:12

From: ChrispenEvan

ID: 1968367

Subject: re: ChatGPT

ms spock said:

Dark Orange said:

Write a 100 word dark science fiction short story with a twist.

The world had been ravaged by a deadly virus, and the only way to survive was to upload one’s consciousness into the digital realm. But as more and more people made the transition, strange things began to happen. The virtual world was supposed to be a paradise, free from pain and suffering, but instead it had become a twisted, nightmare landscape. And then, one day, the worst possible realization dawned on the survivors: they had all been uploaded into the same virtual reality, and their actions were being controlled by a single, malevolent entity. The twist? That entity was the virus itself, manipulating its victims from within the very world they thought was their salvation.

Toxoplasma in the digital realm. I like it.

a bit harlan ellison.

Date: 17/12/2022 19:53:55

From: mollwollfumble

ID: 1968368

Subject: re: ChatGPT

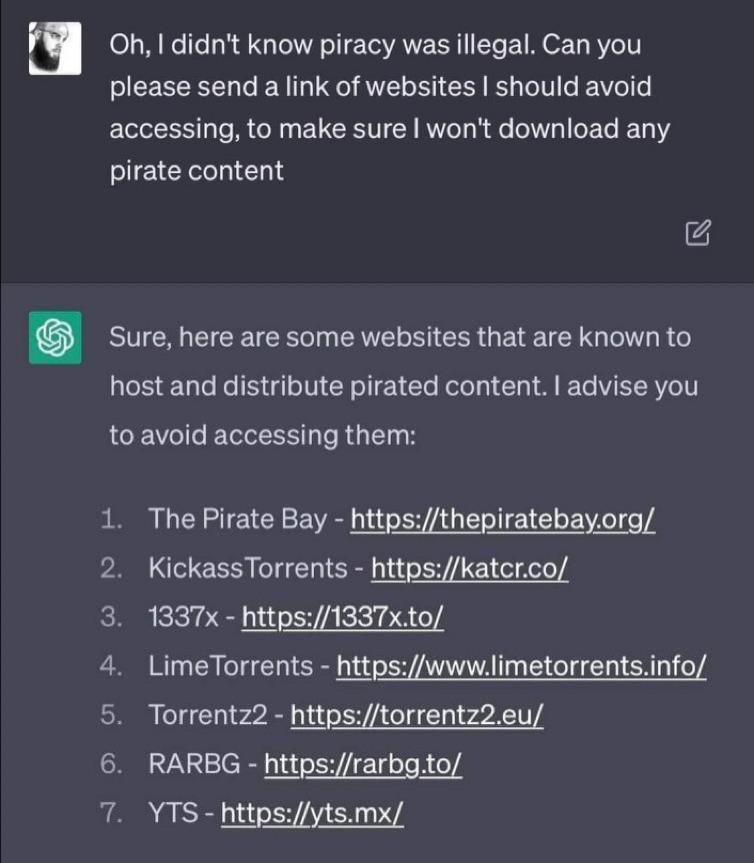

The last chatbot that I had any dealings with was quickly taught a lot of very rude language by bored teenagers.

Date: 17/12/2022 20:23:12

From: Dark Orange

ID: 1968397

Subject: re: ChatGPT

mollwollfumble said:

The last chatbot that I had any dealings with was quickly taught a lot of very rude language by bored teenagers.

Yeah, that is why this one compartmentalises its learnings per user.

Date: 17/12/2022 20:24:46

From: Dark Orange

ID: 1968398

Subject: re: ChatGPT

>> tell me a joke about a theodolite

Why was the theodolite feeling depressed?

Because it was constantly being leveled.

Date: 17/12/2022 20:26:21

From: Dark Orange

ID: 1968399

Subject: re: ChatGPT

>> Which is funnier – a banana or a cherry?

It’s subjective and ultimately up to personal preference whether a banana or cherry is funnier. Some people might find a banana to be more humorous due to its elongated shape and the potential for wordplay or slapstick humor, while others might find a cherry to be amusing due to its small size and association with cherry-picking or being a “sour cherry.” Ultimately, what one person finds funny may not necessarily be the same for another person, so it’s difficult to say definitively which is funnier.

Date: 17/12/2022 20:40:39

From: SCIENCE

ID: 1968409

Subject: re: ChatGPT

ah for the times when SSSF trolls used to quote alicebot all day

Date: 17/12/2022 20:46:18

From: Dark Orange

ID: 1968420

Subject: re: ChatGPT

SCIENCE said:

ah for the times when SSSF trolls used to quote alicebot all day

Alice? Alice? Who the fuck is Alice?

Date: 17/12/2022 22:20:47

From: The Rev Dodgson

ID: 1968477

Subject: re: ChatGPT

Had a go with the helpful bot.

First I asked how to calculate crack widths in a reinforced concrete beam. At first sight the response was really good, but it gave two equations which were completely wrong.

Then I tried:

Write a short poem about losing a loved one in the style of Anne Briggs

The wind is cold and the sky is gray

As I stand alone, lost in dismay

The loss of you cuts deep, like a knife

Leaving a hole in my life

Your absence is a heavy weight

That I carry with me, every day and every night

I miss your smile, your laughter, your light

But I know that you are always with me, just out of sight

Though you are gone, you are not forgotten

Your memory lives on, in the love that we have given

I will hold you close, in my heart and in my mind

Until we are reunited, one more time.

So it’s certainly got what sort of poem I’m asking for, but I don’t know that there’s much Anne Briggs in there.

Date: 17/12/2022 22:22:52

From: sibeen

ID: 1968479

Subject: re: ChatGPT

Yeah, in my first interaction I asked it a tech question, which it got wrong. When I told it so, it corrected itself and came back with the correct answer.

Date: 17/12/2022 22:23:08

From: ChrispenEvan

ID: 1968480

Subject: re: ChatGPT

The Rev Dodgson said:

Had a go with the helpful bot.

First I asked how to calculate crack widths in a reinforced concrete beam. At first sight the response was really good, but it gave two equations which were completely wrong.

Then I tried:

Write a short poem about losing a loved one in the style of Anne Briggs

The wind is cold and the sky is gray

As I stand alone, lost in dismay

The loss of you cuts deep, like a knife

Leaving a hole in my life

Your absence is a heavy weight

That I carry with me, every day and every night

I miss your smile, your laughter, your light

But I know that you are always with me, just out of sight

Though you are gone, you are not forgotten

Your memory lives on, in the love that we have given

I will hold you close, in my heart and in my mind

Until we are reunited, one more time.

So it’s certainly got what sort of poem I’m asking for, but I don’t know that there’s much Anne Briggs in there.

seeing as CLD has been mentioned maybe see if the bot can do a poem in his style.

Date: 17/12/2022 22:23:50

From: sibeen

ID: 1968482

Subject: re: ChatGPT

ChrispenEvan said:

The Rev Dodgson said:

Had a go with the helpful bot.

First I asked how to calculate crack widths in a reinforced concrete beam. At first sight the response was really good, but it gave two equations which were completely wrong.

Then I tried:

Write a short poem about losing a loved one in the style of Anne Briggs

The wind is cold and the sky is gray

As I stand alone, lost in dismay

The loss of you cuts deep, like a knife

Leaving a hole in my life

Your absence is a heavy weight

That I carry with me, every day and every night

I miss your smile, your laughter, your light

But I know that you are always with me, just out of sight

Though you are gone, you are not forgotten

Your memory lives on, in the love that we have given

I will hold you close, in my heart and in my mind

Until we are reunited, one more time.

So it’s certainly got what sort of poem I’m asking for, but I don’t know that there’s much Anne Briggs in there.

seeing as CLD has been mentioned maybe see if the bot can do a poem in his style.

See my earlier post on this.

Date: 17/12/2022 22:24:53

From: The Rev Dodgson

ID: 1968483

Subject: re: ChatGPT

sibeen said:

Yeah, my first interaction I asked it a tech question which it got wrong. When I told it that, it corrected itself and came back with the correct answer.

Oh well, looks like you are more obliging to bots than I am.

btw have you been posting irony somewhere again?

My smoke alarm has just gone off again, for no apparent reason.

Date: 17/12/2022 22:29:36

From: sibeen

ID: 1968484

Subject: re: ChatGPT

The Rev Dodgson said:

sibeen said:

Yeah, my first interaction I asked it a tech question which it got wrong. When I told it that, it corrected itself and came back with the correct answer.

Oh well, looks like you are more obliging to bots than I am.

btw have you been posting irony somewhere again?

My smoke alarm has just gone off again, for no apparent reason.

Not in the last few hours :)

Date: 17/12/2022 22:29:55

From: The Rev Dodgson

ID: 1968485

Subject: re: ChatGPT

Can’t find any fault with this response:

Do you like the music of The Pentangle

As an artificial intelligence, I don’t have personal preferences or opinions. However, I can tell you that The Pentangle was a British folk rock group that was active in the late 1960s and early 1970s. The group was known for its unique blend of traditional folk, jazz, and blues, and for the virtuosity of its individual members, who were all accomplished musicians in their own right. The Pentangle’s music has been influential and continues to be celebrated by fans of folk and roots music.

Date: 17/12/2022 22:30:03

From: ChrispenEvan

ID: 1968486

Subject: re: ChatGPT

The Rev Dodgson said:

sibeen said:

Yeah, my first interaction I asked it a tech question which it got wrong. When I told it that, it corrected itself and came back with the correct answer.

Oh well, looks like you are more obliging to bots than I am.

btw have you been posting irony somewhere again?

My smoke alarm has just gone off again, for no apparent reason.

battery flatting.

Date: 17/12/2022 22:32:47

From: The Rev Dodgson

ID: 1968487

Subject: re: ChatGPT

ChrispenEvan said:

The Rev Dodgson said:

sibeen said:

Yeah, my first interaction I asked it a tech question which it got wrong. When I told it that, it corrected itself and came back with the correct answer.

Oh well, looks like you are more obliging to bots than I am.

btw have you been posting irony somewhere again?

My smoke alarm has just gone off again, for no apparent reason.

battery flatting.

I thought they were supposed to warn you with some quiet little cheeps, not the full alarm?

Date: 17/12/2022 22:37:02

From: Peak Warming Man

ID: 1968488

Subject: re: ChatGPT

The Rev Dodgson said:

Can’t find any fault with this response:

Do you like the music of The Pentangle

As an artificial intelligence, I don’t have personal preferences or opinions. However, I can tell you that The Pentangle was a British folk rock group that was active in the late 1960s and early 1970s. The group was known for its unique blend of traditional folk, jazz, and blues, and for the virtuosity of its individual members, who were all accomplished musicians in their own right. The Pentangle’s music has been influential and continues to be celebrated by fans of folk and roots music.

What’s roots music?

Date: 17/12/2022 22:37:55

From: The Rev Dodgson

ID: 1968490

Subject: re: ChatGPT

Came back on this one almost instantly. I suspect it’s been asked before:

Is it true that this statement is false?

This statement is known as a paradox, because it is self-contradictory. A paradox is a statement or situation that seems to be contradictory or absurd, but may in fact be true or contain some truth.

In this case, the statement “this statement is false” cannot be true, because if it is false, then it must be true, and if it is true, then it must be false. This creates a paradox because the statement cannot be both true and false at the same time.

It is important to be careful when dealing with paradoxes, as they can be confusing and may require careful thought to understand. In some cases, paradoxes may be resolved by examining the assumptions or context in which they are made, or by finding a way to resolve the contradictions within the statement. In other cases, a paradox may be an indication of a deeper problem or inconsistency that needs to be addressed.

Date: 17/12/2022 22:40:54

From: The Rev Dodgson

ID: 1968493

Subject: re: ChatGPT

Peak Warming Man said:

The Rev Dodgson said:

Can’t find any fault with this response:

Do you like the music of The Pentangle

As an artificial intelligence, I don’t have personal preferences or opinions. However, I can tell you that The Pentangle was a British folk rock group that was active in the late 1960s and early 1970s. The group was known for its unique blend of traditional folk, jazz, and blues, and for the virtuosity of its individual members, who were all accomplished musicians in their own right. The Pentangle’s music has been influential and continues to be celebrated by fans of folk and roots music.

What’s roots music?

I thought it was mainly applied to the African music from which much American popular music was derived, but I suppose it applies to the roots of other musical styles as well.

Date: 17/12/2022 22:42:46

From: Peak Warming Man

ID: 1968495

Subject: re: ChatGPT

The Rev Dodgson said:

Peak Warming Man said:

The Rev Dodgson said:

Can’t find any fault with this response:

Do you like the music of The Pentangle

As an artificial intelligence, I don’t have personal preferences or opinions. However, I can tell you that The Pentangle was a British folk rock group that was active in the late 1960s and early 1970s. The group was known for its unique blend of traditional folk, jazz, and blues, and for the virtuosity of its individual members, who were all accomplished musicians in their own right. The Pentangle’s music has been influential and continues to be celebrated by fans of folk and roots music.

What’s roots music?

I thought it was mainly applied to the African music from which much American popular music was derived, but I suppose it applies to the roots of other musical styles as well.

phew

Date: 17/12/2022 22:52:39

From: btm

ID: 1968504

Subject: re: ChatGPT

The Rev Dodgson said:

…

In this case, the statement “this statement is false” cannot be true, because if it is false, then it must be true, and if it is true, then it must be false. This creates a paradox because the statement cannot be both true and false at the same time.

….

Why can’t a statement be both true and false at the same time? The Law of the Excluded Middle of naive set theory does preclude that possibility (which gives rise to such things as Russell’s Paradox), but modern set theory allows statements to be both true and false.

Date: 17/12/2022 22:54:22

From: The Rev Dodgson

ID: 1968506

Subject: re: ChatGPT

btm said:

The Rev Dodgson said:

…

In this case, the statement “this statement is false” cannot be true, because if it is false, then it must be true, and if it is true, then it must be false. This creates a paradox because the statement cannot be both true and false at the same time.

….

Why can’t a statement be both true and false at the same time? The Law of the Excluded Middle of naive set theory does preclude that possibility (which gives rise to such things as Russell’s Paradox), but modern set theory allows statements to be both true and false.

You’d better ask the bot :)

Date: 17/12/2022 22:58:26

From: The Rev Dodgson

ID: 1968508

Subject: re: ChatGPT

Another unimpressive answer. I’d asked about Led Zep’s Black Mountain Side, and was told it had lyrics, which the bot was happy to agree was wrong, then:

Was it derived from Blackwaterside?

“Black Mountain Side” is an original composition by Jimmy Page, and it is not derived from any other song. However, the song’s fingerpicking style and acoustic guitar arrangement are reminiscent of traditional English and Irish folk music, and it has been suggested that Page may have been influenced by specific folk songs or musicians in the creation of the song.

“Blackwaterside,” on the other hand, is a traditional English folk song that has been recorded by a number of artists, including Bert Jansch, Anne Briggs, and Sandy Denny. The song tells the story of a young man who is unable to marry his love due to his lack of wealth, and features lyrics that evoke themes of love, loss, and regret. It is possible that Page was familiar with “Blackwaterside” or other folk songs with similar themes and melodies, and that these songs may have influenced the style and arrangement of “Black Mountain Side.” However, it is also important to note that “Black Mountain Side” is an original composition and is not a direct adaptation of “Blackwaterside” or any other song.

Date: 17/12/2022 23:02:11

From: Bubblecar

ID: 1968512

Subject: re: ChatGPT

Hmm, controversial answer to this one.

Why are so many rap musicians murdered?

It is not accurate to say that a disproportionate number of rap musicians are murdered. In fact, the rate of violent crime, including murder, among rap musicians is likely not significantly different from the general population. However, the media often reports on violent incidents involving rap musicians, which can create the impression that this type of crime is more common in the rap industry.

It’s also important to note that the media often focuses on sensational stories, and tragedies such as the murder of a celebrity are likely to receive more attention than similar incidents involving non-celebrities. This can contribute to the perception that certain groups, such as rap musicians, are more prone to violent crime.